The global defence and security environment is changing in ways never seen before. The world has entered an era of intense competition in almost all spheres: geopolitical, economic, military, technological, and industrial. On one hand, competition is helpful because it speeds up innovation and development. On the other hand, when it becomes unmanageable and employs unfair means, it multiplies our security vulnerabilities and may trigger future conflicts.

The competition is evident even during the current COVID-19 pandemic, which has claimed millions of lives. The U.S. blames China for the disease, while Beijing tries to win friends through “vaccine diplomacy” and by offering aid to affected countries. There is also concern that the current situation will be exploited for political, economic, or military gain. For example, media outlets have linked the coronavirus outbreak to biowarfare programs.

Now consider a global threat such as climate change, which continues to cause frequent extreme weather events such as heatwaves, droughts, and floods, as well as disease outbreaks and food and water insecurity. The race to mitigate climate change has given rise to a renewable energy race among countries including the United States, China, and those of Europe to cut fossil fuels, boost clean energy, and transform their economies in the process.

Infrastructure development has become another competition, led by China, which is promoting the Belt and Road Initiative (BRI) to expand and secure its economic, political, and military advantages. The BRI comprises a land route, the Silk Road Economic Belt, which will link China with Europe through Central and Western Asia, and a sea route, the Maritime Silk Road, which will connect China with Southeast Asian countries, Africa, and Europe. The recent Build Back Better World (B3W) initiative of the G7, led by the US, is intended to compete with the Chinese Belt and Road.

More and more physical spaces are becoming militarized, from the deep oceans to the Arctic to cislunar space and the Moon, and technologies ranging from surveillance, platforms, propulsion, and materials to weapons are being developed to dominate these domains. Again, one of the chief motives is resource competition. For example, it is estimated that minerals and metals worth trillions of dollars are buried in asteroids that come close to the Earth; hence the race for asteroid mining. Similarly, as global warming melts the Arctic ice, it is opening up new shipping routes, and intense competition has begun over an estimated $1 trillion in untapped reserves of oil, natural gas, and minerals.

Technology forecasting requirements

The competition has also become intense in emerging technologies. The US, China, and Russia are spending billions of dollars to assume global leadership in technologies such as AI, robotics, and quantum technologies in order to secure a future economic and military advantage, leading to a vigorous arms race in emerging technologies.

AI is projected to create 11 trillion dollars of economic value in the next decade. In defense and security, AI is emerging as the biggest force multiplier. It is being embedded in every military technology, platform, system, and network, from the individual soldier to the entire military enterprise, making them smart and intelligent.

Similarly, the total market for quantum technologies (computing, cryptography, sensing) is projected to grow to US$2.9 billion by 2030. Quantum technologies will lead to major advances in precision timing, sensors, and computation, and are destined to have a major impact on the defense, aerospace, energy, infrastructure, and telecommunications sectors. The race is to develop the first large-scale programmable quantum computer, which will bestow enormous economic and military advantages on the winner.

The competition is also intense in synthetic biology, which is predicted to transform defense and security by providing on-demand bio-production of novel drugs, materials, food, fuels, sensors, and coatings. New genome editing techniques may allow scientists to harness organisms or biological systems as weapons or to build the super soldiers of tomorrow.

There is also an innovation race, in both the amount and the speed of innovation, to exploit these technologies and develop military systems as early as possible through strategies such as civil-military integration.

Commercial and military innovation also depends on manufacturing technologies, and there is an emerging race in areas such as additive manufacturing, or 3D printing. 3D printing is an ongoing revolution in manufacturing because of its potential to fabricate almost any complex object, and it is being used to make everything from aerospace components to human organs, textiles, metals, buildings, and even food. New digital engineering methods are also being used to accelerate the development of military systems and weapons.

Another worldwide race, among Germany, the US, Japan, and China, is to dominate the fourth industrial revolution, or Industry 4.0. It will lead to ‘Smart Factories’, which are predicted to have a great effect on global economies, with estimated annual efficiency gains in production of between 6% and 8%. For militaries, it will also lead to dominance in the aerospace and defense industrial base.

Looking into the future, AI is enabling the next military revolution, “intelligentized warfare,” in which AI will face AI: we will have to attack adversary AI systems and protect our own. Warfare has also entered the neuro-cognitive domain, and countries are developing defensive and offensive capabilities to control or manipulate the minds of soldiers and commanders.

An important challenge for the Department of Defense (DOD) science and technology (S&T) programs is to avoid technological surprise resulting from the exponential increase in the pace of discovery and change in S&T worldwide. The nature of the military threat is also changing, resulting in new military requirements, some of which can be met by technology.

Proper shaping of the S&T portfolio requires predicting and matching these two factors well into the future. Some examples of technologies which have radically affected the battlefield include the Global Positioning System coupled with inexpensive hand held receivers, the microprocessor revolution which has placed the power of the Internet and satellite communications into the hands of soldiers in the field, new sensing capabilities such as night vision, the use of unmanned vehicles, and composite materials for armor and armaments. Some of these new technologies came from military S&T, some from commercial developments and still others from a synthesis of the two sectors; but all were based on advances in the underlying sciences. Clearly, leaders and planners in military S&T must keep abreast of such developments and look ahead as best they can.

Technology forecasting is increasingly recognized for its value in both commercial and government endeavors. Worldwide, at least 23 organizations perform technology forecasting as their major product for external customers (Lerner et al. 2015). In addition, virtually every organization engages in some form of forecasting for internal purposes, implicitly or explicitly, in order to plan its activities. Such forecasts are valuable to a military establishment for a clear reason: a military procurement institution uses technology forecasts to determine what system development and procurement efforts should be undertaken (and funded) to optimize the future value of the resulting technology to those who would have to fight in a future war. If the forecast is wrong, the military may end up fighting an enemy who possesses weapons of superior technology (Van Creveld 2010), not unlike a business that relies on a technology forecast to avoid falling behind its competitors.

Technology Forecasting

While most technology forecasts performed for business purposes are short term (a horizon of 1–5 years), technology forecasts for the military are in many cases (although there are important exceptions) either mid-term (6–10 years) or long term (11–30 years). Long-term forecasts are necessary in the world of military technology because of the long periods required to fully develop some types of complex military systems. Unfortunately, it has become common for a major defense acquisition program to take on the order of two decades from concept development to initial operating capability. Before that, it takes another 10 or more years to develop the necessary foundational science. That is why a horizon of 20 years or longer is often important for military technology forecasts that support development and procurement, particularly critical management decisions on allocating funds to long-term research topics.
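The horizon bands above can be sketched in a few lines of Python. This is only an illustration: the function name `classify_horizon` and the two constants are hypothetical, and the lead-time figures are the rough lower bounds quoted in the paragraph, not measured data.

```python
def classify_horizon(years_ahead: int) -> str:
    """Map a forecast horizon in years to the bands named in the text."""
    if years_ahead < 1:
        raise ValueError("horizon must be at least 1 year")
    if years_ahead <= 5:
        return "short term"
    if years_ahead <= 10:
        return "mid-term"
    if years_ahead <= 30:
        return "long term"
    return "beyond long term"  # assumption: the text defines no band past 30 years


# Rough figures quoted in the paragraph above (illustrative, not precise).
FOUNDATIONAL_SCIENCE_YEARS = 10  # "another 10 or more years"
ACQUISITION_YEARS = 20           # "on the order of two decades"

total_lead_time = FOUNDATIONAL_SCIENCE_YEARS + ACQUISITION_YEARS
print(total_lead_time, classify_horizon(total_lead_time))
```

Adding the two lead times shows why a research funding decision made today may need a forecast that sits at the far end of the long-term band.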

What changes are likely in military technology over the next 20 years? This question is fascinating on its own terms. More importantly, answering it is crucial for making appropriate changes in U.S. and allied weaponry, military operations, wartime preparations, and defense budget priorities. To be sure, technology is advancing fast in many realms. Defense resource decisions need to be based on concrete analysis that breaks down the categories of major military technological invention and innovation one by one and examines each. Presumably, those areas where things are changing fastest may warrant the most investment, as well as the most creative thinking about how to modify tactics and operational plans to exploit new opportunities (and mitigate new vulnerabilities that adversaries may develop as a result of these same likely advances).

Technology forecasting attempts to predict the future characteristics of useful technological machines, procedures, or techniques. Researchers create technology forecasts based on past experience and current technological developments. Like other forecasts, technology forecasts can help both public and private organizations make smart decisions. By analyzing future opportunities and threats, the forecaster can improve decisions in order to achieve maximum benefit. Failure to forecast a technology (a false negative) is just as serious as an incorrect forecast that a technology would appear (a false positive). If a country fails to anticipate a critical military technology and fails to make appropriate investments, it may face a catastrophic surprise at the hands of an adversary who did acquire the technology.

Technological forecasts do not necessarily need to predict the precise form a technology will take in a given application at some specific future date. Like any other forecast, their purpose is simply to help evaluate the probability and significance of various possible future developments so that managers can make better decisions.

There are several traditional metrics used to track and monitor emerging technologies (from Semiliterate):

- Patent applications (who is inventing new things?)

- Publications (who is writing journal articles? where are they?)

- Mergers + Acquisitions (what emerging startups do incumbent companies think present threats/opportunities?)

- Venture Capital (where does the “smart” money go?)

- Public R&D + Regulatory Interest (what is the government betting on?)

- Hobbyist interest (how enthusiastic about an idea are people on Reddit etc.?)
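One simple way to use these tracking signals is to compare activity counts from one year to the next and flag the ones that are growing quickly. The sketch below assumes hypothetical signal names that mirror the list above, made-up counts, and an arbitrary 25% threshold; none of these come from the source.

```python
# Hypothetical signal names mirroring the list above.
SIGNALS = ["patents", "publications", "m_and_a",
           "venture_capital", "public_rd", "hobbyist"]


def growth_rate(previous: float, current: float) -> float:
    """Relative year-over-year change; 0.0 when there is no baseline."""
    if previous <= 0:
        return 0.0
    return (current - previous) / previous


def rising_signals(last_year: dict, this_year: dict,
                   threshold: float = 0.25) -> list:
    """Return the signals whose activity grew by more than `threshold`."""
    return [
        s for s in SIGNALS
        if growth_rate(last_year.get(s, 0), this_year.get(s, 0)) > threshold
    ]


# Made-up counts for a single technology area, for illustration only.
last_year = {"patents": 120, "publications": 300, "venture_capital": 40}
this_year = {"patents": 180, "publications": 310, "venture_capital": 90}
print(rising_signals(last_year, this_year))  # ['patents', 'venture_capital']
```

Signals with no baseline are treated as flat here, which is a deliberate simplification; a real tracker would need to decide how to handle new activity.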

There are also several traditional metrics used to evaluate emerging technologies:

- Technology readiness level (determine technology maturity/viability)

- Potential for growth (is there a market for the technology?)

- Potential for adoption (will people care about the technology?)

- Speed of growth/adoption (is the technology poised to become exponentially more popular?)

- Criticality (does being first confer an asymmetric advantage? is winning zero-sum?)

All of these metrics are useful, but some of them are paywalled (M&A activity databases), some of them are too technical for the lay observer (patent databases), some of them are qualitative (criticality) and some of them require collaborative work (TRL assessments). For individuals interested in tracking emerging trends in the semiconductor industry, an eye for synthesizing seemingly idiosyncratic developments is essential. That is what this post is about.

A Technology Readiness Assessment (TRA) is a systematic, metrics-based process that assesses the maturity of, and the risk associated with, the critical technologies (CTs) to be used in Major Defense Acquisition Programs (MDAPs). The TRA evaluates CTs at specific points in time for integration into a larger system. It frequently uses a maturity scale, the technology readiness levels (TRLs), which is ordered according to the characteristics of the demonstration or testing environment in which a given technology was tested at defined points in time. The scale consists of nine levels, each requiring the technology to be demonstrated at a higher level of fidelity than the previous one in terms of its form, its level of integration with other parts of the system, and its operating environment. It starts at level 1 with paper studies of the basic concept, moves to laboratory demonstrations around level 4, and ends at level 9, where the technology is in its final form, integrated into a product, and proven through successful mission operations in its intended environment.

Experts recommend that the highest level, TRL 9, which calls for proof of the system through successful mission operations, not be required, because opportunities to perform mission operations depend on politico-military circumstances rather than on the technology itself. Therefore, a system prototype demonstrated at TRL 8 is accepted as proof of existence of a technology. A technology is also considered “available” if it is offered for sale commercially or if it has been successfully fielded, at least on an experimental basis.
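The availability rule described above can be written down as a small check. This is a minimal sketch: the `Technology` class, its field names, and `is_available` are hypothetical, and only the TRL levels the text actually describes are annotated.

```python
from dataclasses import dataclass

# Notes only for the levels the text describes; other levels are left blank.
TRL_NOTES = {
    1: "paper studies of the basic concept",
    4: "laboratory demonstrations (approximately)",
    8: "system prototype demonstrated",
    9: "proven through successful mission operations",
}


@dataclass
class Technology:
    name: str
    trl: int
    sold_commercially: bool = False
    fielded_experimentally: bool = False

    def __post_init__(self):
        # The scale has nine levels, so anything else is a data error.
        if not 1 <= self.trl <= 9:
            raise ValueError("TRL must be between 1 and 9")


def is_available(tech: Technology) -> bool:
    """Apply the rule from the text: TRL 8 or higher, or sold, or fielded."""
    return tech.trl >= 8 or tech.sold_commercially or tech.fielded_experimentally


print(is_available(Technology("prototype sensor", trl=8)))        # True
print(is_available(Technology("lab concept", trl=4)))             # False
print(is_available(Technology("commercial drone", trl=6,
                              sold_commercially=True)))           # True
```

Treating the three conditions as alternatives reflects the text's point that mission operations (TRL 9) depend on circumstances outside the technology itself.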

In the military domain, technologies can be grouped into many categories. A few of them are as follows:

- Line-of-Sight Effects: weapons and other systems that unleash effects on enemy targets directly visible to the “shooter”

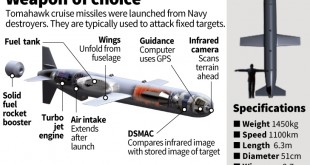

- Non-Line-of-Sight Effects: weapons and other systems that send munitions or other effects at targets that are not directly visible

- Protection: systems used to defeat or mitigate the effects imposed by the enemy on friendly targets

- Platforms: systems that move warriors and systems to and around the battlefield

- Cyber and Electronic Warfare: systems used to interfere with the communication, processing, and storage of the enemy’s information

- Sensing and Information Collection: systems for obtaining information about the enemy and friendly assets

- Command and Control: systems for interpreting the battlefield information, and making and communicating the decisions on actions

Technology Convergence

Experts recommend a two-step technology convergence concept: first, list the outcomes and associated capabilities the Army desires at a future point in time; then, look for confluences or convergences of individual sciences and technologies that would enable those capabilities. This would be achieved by forming clusters of sciences and technologies judged to be likely sources of such convergences and forecasting the evolution of these component topics on a common timeline. The resulting roadmap for each cluster would highlight where convergences of matured subjects are likely to occur and, therefore, where the capabilities and outcomes would be realized.
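The roadmap idea can be illustrated with a toy calculation. The sketch below assumes that a capability is realized in the year the last of its component technologies is forecast to mature; that rule, the function names, the technology labels, and the years are all hypothetical and chosen only to show the mechanics.

```python
def convergence_year(required: set, maturity_forecast: dict):
    """Year when every required technology is forecast mature, else None."""
    # Any component without a forecast means no convergence can be placed.
    if required.difference(maturity_forecast):
        return None
    return max(maturity_forecast[t] for t in required)


def build_roadmap(capabilities: dict, maturity_forecast: dict) -> list:
    """List (capability, year) pairs on a common timeline, earliest first."""
    dated = [
        (name, convergence_year(techs, maturity_forecast))
        for name, techs in capabilities.items()
    ]
    return sorted((item for item in dated if item[1] is not None),
                  key=lambda item: item[1])


# Made-up maturity forecasts and clusters, for illustration only.
forecast = {"sensing": 2030, "autonomy": 2034, "energy storage": 2032}
capabilities = {
    "capability A": {"sensing", "autonomy"},
    "capability B": {"sensing", "energy storage"},
    "capability C": {"autonomy", "propulsion"},  # no forecast for propulsion
}
print(build_roadmap(capabilities, forecast))
# [('capability B', 2032), ('capability A', 2034)]
```

Capability C is left off the roadmap because one of its components has no forecast, which is itself a useful signal about where forecasting effort is missing.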

Early convergences led to the invention of radar, which arose from the application of electromagnetic radiation (science) to how it interacts with materials, culminating in the development of microwave generators, transmitters, and power supplies, among other devices (technology). The technology so heavily employed today in wireless handheld devices coupled with Internet access dates back to technological advances in the science of telephony and advances in solid-state physics. These advances have provided processes for developing the fiber optics and computer technology enabling broadband network service throughout the world.

Convergences in S&T also occurred within the life sciences disciplines. Most notably this occurred early on in 1953 via the discovery of DNA’s double helix structure by Watson and Crick. A confluence of organic chemistry, physics, genomics, and information technology further provided the ability to amplify and replicate the DNA molecule in mass quantities, leading to advances in protein sequencing and synthesis in the 1980s. These initial convergences led to such advances as the sequencing of the human genome. Further innovations in information technology (IT) made possible a deeper understanding of the workings of human gene interactions, from which new fields of science have emerged such as genomics, proteomics, transcriptomics, and metabolomics. Modern medicine bases many of its practices on information resulting from this process of continuous convergences, making a strong case for an emphasis on forecasting further S&T convergences.

The job of technology forecasting has changed dramatically over the past few decades, thanks largely to the exponential growth of computing power. For example, a recent paper from the Northwestern University Institute on Complex Systems describes how artificial intelligence can help predict which new scientific studies will be most reproducible.

Identifying emerging technologies likely to have a high impact on defence and security affairs in the short and medium term, and supporting their development

US DOD

The military’s No. 2 officer, Gen. John Hyten, wants more from the defense industrial base, saying contractors need to deliver more timely capabilities that offer “integrated deterrence.” Hyten is not the only high-ranking officer to ask industry to focus on new tech. Adm. Michael Gilday, chief of naval operations, recently told industry to stop lobbying Congress to require the military to buy old platforms he says it doesn’t need.

“Although it’s in industry’s best interest … building the ships that you want to build, lagging on repairs to ships and submarines, lobbying Congress to buy aircraft that we don’t need … it’s not helpful,” Gilday said at the Navy League’s Sea-Air-Space conference. “It really isn’t in a budget-constrained environment.” Gilday later added what he does want to see from industry, not just what it should not do: “Industry can help pivot to new technologies and new platforms.”

Hyten said that, to get industry up to speed on the new direction in which the military wants to experiment, the Joint Requirements Oversight Council (JROC) will host an industry day where leaders will brief contractors on the Joint Warfighting Concept (JWC). The JROC sets requirements for the military’s platforms and weapon systems and has been working on requirements for more data sharing that the services will need to implement in their acquisitions.

The JWC has four pillars, all of which revolve around weapon systems that can connect and share data with one another in order to converge their capabilities: long-range precision fires, Joint All Domain Command and Control (JADC2), contested logistics, and information advantage. All four are predicated on the ability to share data and integrate capabilities, as with JADC2, in which all sensors would be able to connect and use artificial intelligence to analyze data coming from a battlefield, like a military internet-of-things.

U.S. Army Futures Command

U.S. Army Futures Command aims to bring future capabilities to a force the U.S. government wants to be able to fight and win against near-peer adversaries across multiple war-fighting domains. Those capabilities include long-range precision fires, next-generation combat vehicles, future vertical lift aircraft, a new battlefield network, air and missile defense, soldier lethality systems, and a synthetic training environment.

Gen. Mike Murray has stressed that the Army must interface more with academia and nontraditional businesses, not just to brainstorm future capabilities but also to collaborate on development efforts. He created the Army Applications Laboratory — which has set up several cohorts to work on specific problems soldiers must solve if battlefield operations are to improve — and the Army Software Factory — set up to establish a force capable of coding at the tactical edge, as weapon systems are expected to increasingly rely on embedded autonomous and artificial intelligence technology.

U.S. Army Futures Command is employing technology scientists and watchers to forecast trends and help prepare for war in coming decades. Ignite scans the technological horizon for upcoming advances in electronics, artificial intelligence, space, biotech, and more. Its forecasts, informed by data and machine learning, are intended to help the Army arm, organize, and train itself for conflicts around 2040 to 2050. The role of the scientists is to survey the tech landscape, see how some breakthroughs will lead to others, and then create scenarios and concepts for how those technologies will shape not just what the Army does but what adversaries might do as well.

International Defense Security & Technology Your trusted Source for News, Research and Analysis
