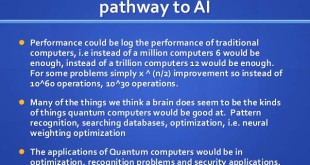

Quantum chips perform computations using quantum bits, called “qubits,” that can represent the two states corresponding to classical binary bits (a 0 or a 1) or a “quantum superposition” of both states simultaneously. The unique superposition state can enable quantum computers to solve problems that are practically impossible for classical computers, potentially spurring breakthroughs in material design, drug discovery, and machine learning, among other applications.
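A minimal sketch of what “superposition” means numerically may help here. It assumes the textbook description of a single qubit as two amplitudes, with the standard Hadamard gate used to create an equal superposition; the helper names `apply_gate` and `measurement_probabilities` are illustrative and do not come from the researchers' work.

```python
import math

def apply_gate(gate, state):
    """Multiply a 2x2 gate matrix by a two-amplitude qubit state."""
    return [
        gate[0][0] * state[0] + gate[0][1] * state[1],
        gate[1][0] * state[0] + gate[1][1] * state[1],
    ]

def measurement_probabilities(state):
    """Born rule: an outcome's probability is the squared magnitude of its amplitude."""
    return [abs(amplitude) ** 2 for amplitude in state]

s = 1 / math.sqrt(2)
HADAMARD = [[s, s], [s, -s]]  # standard Hadamard gate

zero = [1.0, 0.0]                            # the state that behaves like a classical 0
superposed = apply_gate(HADAMARD, zero)      # equal superposition of 0 and 1
probs = measurement_probabilities(superposed)
restored = apply_gate(HADAMARD, superposed)  # the Hadamard gate is its own inverse

print(probs)     # both outcomes are (approximately) equally likely
print(restored)  # back to (approximately) the original 0 state
```

Measuring the superposed qubit gives 0 or 1 with roughly equal probability, yet applying the same gate again returns the qubit to 0. That reversibility is the property the verification protocol described later relies on.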

John Preskill, a theoretical physicist at Caltech, coined the term “quantum supremacy” for the point at which a quantum computer can do calculations beyond the reach of today’s fastest supercomputers. Full-scale quantum computers will require millions of qubits, which isn’t yet feasible. To run algorithms beyond the scope of classical simulations, a quantum processor needs more than a large number of qubits: it must also have low error rates on readout and on logical operations, such as single- and two-qubit gates.

In the past few years, researchers have started developing “Noisy Intermediate-Scale Quantum” (NISQ) chips, which contain around 50 to 100 qubits. Preskill introduced the term in a paper arguing that quantum computing was about to enter a NISQ phase, in which machines will have 50 to a few hundred qubits. That’s just enough to demonstrate “quantum advantage,” meaning the NISQ chip can solve certain problems that are intractable for classical computers. “‘Noisy,’” he wrote, “means that we’ll have imperfect control over those qubits; the noise will place serious limitations on what quantum devices can achieve in the near term.”

The current focus of experiments aiming to realize scalable quantum computation is to demonstrate a quantum computational advantage. This means performing a quantum computation to solve a problem that is shown to be classically intractable under plausible complexity-theoretic assumptions. Examples of such problems, suitable for near-term experiments, include boson sampling and instantaneous quantum polynomial time (IQP) computations, among others.

Quantum computers promise to efficiently solve not only problems believed to be intractable for classical computers, but also problems for which verifying the solution is itself considered intractable. Verifying that the chips performed operations as expected can therefore be very inefficient. The chip’s outputs can look entirely random, so it takes a long time to simulate the steps and determine whether everything went according to plan.

This raises the question of how one can check whether quantum computers are indeed producing correct results. This task, known as quantum verification, has been highlighted as a significant challenge on the road to scalable quantum computing technology.

Aharonov and Vazirani have argued that although many of the predictions of quantum mechanics have been experimentally verified to a remarkable precision, all of them involved systems of low complexity. In other words, they involved few particles or few degrees of freedom for the quantum mechanical system. But the same technique of “predict and verify” would quickly become infeasible for systems of even a few hundred interacting particles due to the exponential overhead in classically simulating quantum systems. And so what if, they ask, the predictions of quantum mechanics start to differ significantly from the real world in the high complexity regime? How would we be able to check this? Thus, the fundamental question is whether there exists a verification procedure for quantum mechanical predictions which is efficient for arbitrarily large systems.
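The “exponential overhead” can be made concrete with a back-of-the-envelope calculation. The sketch below assumes each particle is a two-level system (a qubit) and that a brute-force classical simulation stores one complex amplitude of 16 bytes for each of the 2^n basis states; the function name `statevector_bytes` is illustrative.

```python
def statevector_bytes(num_qubits, bytes_per_amplitude=16):
    """Memory needed to hold every amplitude of an n-qubit state.

    Assumes one complex number (two 64-bit floats, 16 bytes) per amplitude.
    """
    return (2 ** num_qubits) * bytes_per_amplitude

for n in (10, 30, 50, 300):
    print(n, "qubits:", f"{float(statevector_bytes(n)):.3e}", "bytes")
```

Every additional qubit doubles the requirement. Under these assumptions, 50 qubits already need 2^54 bytes (16 pebibytes), and a few hundred qubits are far beyond any conceivable classical memory.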

Researchers have proposed many approaches to quantum verification that differ widely in structure, complexity, and required resources. Cryptographic techniques have proven extremely useful for verification in many of these protocols.

A new method determines whether circuits are accurately executing complex operations that classical computers can’t tackle.

In a step toward practical quantum computing, researchers from MIT, Google, and elsewhere have designed a system that can verify when quantum chips have accurately performed complex computations that classical computers can’t.

In a paper published today in Nature Physics, the researchers describe a novel protocol to efficiently verify that a NISQ chip has performed all the right quantum operations. They validated their protocol on a notoriously difficult quantum problem running on a custom quantum photonic chip.

“As rapid advances in industry and academia bring us to the cusp of quantum machines that can outperform classical machines, the task of quantum verification becomes time critical,” says first author Jacques Carolan, a postdoc in the Department of Electrical Engineering and Computer Science (EECS) and the Research Laboratory of Electronics (RLE). “Our technique provides an important tool for verifying a broad class of quantum systems. Because if I invest billions of dollars to build a quantum chip, it sure better do something interesting.”

Joining Carolan on the paper are researchers from EECS and RLE at MIT, as well as from the Google Quantum AI Laboratory, Elenion Technologies, Lightmatter, and Zapata Computing.

Divide and conquer

The researchers’ work essentially traces an output quantum state generated by the quantum circuit back to a known input state. Doing so reveals which circuit operations were performed on the input to produce the output. Those operations should always match what researchers programmed. If not, the researchers can use the information to pinpoint where things went wrong on the chip.

At the core of the new protocol, called “Variational Quantum Unsampling,” lies a “divide and conquer” approach, Carolan says, that breaks the output quantum state into chunks. “Instead of doing the whole thing in one shot, which takes a very long time, we do this unscrambling layer by layer. This allows us to break the problem up to tackle it in a more efficient way,” Carolan says.

For this, the researchers took inspiration from neural networks — which solve problems through many layers of computation — to build a novel “quantum neural network” (QNN), where each layer represents a set of quantum operations.

To run the QNN, they used traditional silicon fabrication techniques to build a 2-by-5-millimeter NISQ chip with more than 170 control parameters — tunable circuit components that make manipulating the photon path easier. Pairs of photons are generated at specific wavelengths from an external component and injected into the chip. The photons travel through the chip’s phase shifters — which change the path of the photons — interfering with each other. This produces a random quantum output state — which represents what would happen during computation. The output is measured by an array of external photodetector sensors.

That output is sent to the QNN. The first layer uses complex optimization techniques to dig through the noisy output to pinpoint the signature of a single photon among all those scrambled together. Then, it “unscrambles” that single photon from the group to identify what circuit operations return it to its known input state. Those operations should match exactly the circuit’s specific design for the task. All subsequent layers do the same computation — removing from the equation any previously unscrambled photons — until all photons are unscrambled.

As an example, say the input state of qubits fed into the processor was all zeroes. The NISQ chip executes a bunch of operations on the qubits to generate a massive, seemingly randomly changing number as output. (An output number will constantly be changing as it’s in a quantum superposition.) The QNN selects chunks of that massive number. Then, layer by layer, it determines which operations revert each qubit back down to its input state of zero. If any operations are different from the original planned operations, then something has gone awry. Researchers can inspect any mismatches between the recovered operations and the planned ones, and use that information to tweak the circuit design.
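The idea in this example can be illustrated with a deliberately simplified toy, which is not the researchers' protocol. It assumes three independent qubits that each start at zero and receive a single rotation (the standard RY gate), with no entanglement, no noise, and direct access to the amplitudes; a real experiment would estimate probabilities from repeated measurements and would use a proper optimizer rather than a grid search. All names (`recover_angle`, `programmed`, `actual`) are hypothetical.

```python
import math

def ry(theta):
    """Standard single-qubit rotation about the Y axis."""
    c, s = math.cos(theta / 2), math.sin(theta / 2)
    return [[c, -s], [s, c]]

def apply_gate(gate, state):
    return [
        gate[0][0] * state[0] + gate[0][1] * state[1],
        gate[1][0] * state[0] + gate[1][1] * state[1],
    ]

def prob_zero_after_undo(state, phi):
    """Probability of reading 0 after trying to undo the rotation with angle phi."""
    undone = apply_gate(ry(-phi), state)
    return abs(undone[0]) ** 2

def recover_angle(state, steps=3600):
    """Grid search for the undo angle that best returns the qubit to 0."""
    best_phi, best_p = 0.0, -1.0
    for k in range(steps):
        phi = 2 * math.pi * k / steps
        p = prob_zero_after_undo(state, phi)
        if p > best_p:
            best_phi, best_p = phi, p
    return best_phi

programmed = [0.4, 1.3, 2.2]  # angles the circuit was designed to apply
actual = [0.4, 1.5, 2.2]      # angles the (hypothetical) chip applied; qubit 1 is off

# Output states produced by the chip from the all-zero input.
outputs = [apply_gate(ry(theta), [1.0, 0.0]) for theta in actual]

# "Unscramble" one qubit at a time and compare against the design.
recovered = [recover_angle(state) for state in outputs]
tolerance = 0.01
mismatches = [
    qubit for qubit, (want, got) in enumerate(zip(programmed, recovered))
    if abs(want - got) > tolerance
]
print(mismatches)  # → [1]
```

The search recovers each applied angle to within the grid spacing, so only the qubit whose operation deviated from the design is flagged.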

Boson “unsampling”

In experiments, the team successfully ran a popular computational task used to demonstrate quantum advantage, called “boson sampling,” which is usually performed on photonic chips. In this exercise, phase shifters and other optical components manipulate and convert a set of input photons into a different quantum superposition of output photons. Ultimately, the task is to calculate the probability that a certain input state will produce a certain output state. That will essentially be a sample from some probability distribution.

But it’s nearly impossible for classical computers to compute those samples, due to the unpredictable behavior of photons. It’s been theorized that NISQ chips can compute them fairly quickly. Until now, however, there’s been no way to verify that quickly and easily, because of the complexity involved with the NISQ operations and the task itself.
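To see where the classical difficulty comes from, it helps to know that in the standard theory of boson sampling each output probability is given by the permanent of a submatrix of the circuit's unitary matrix, and no efficient classical algorithm for the permanent is known. The sketch below computes it by brute force for the smallest interesting case, two photons entering a 50:50 beam splitter. The function names and the mode-list convention are illustrative assumptions, not taken from the paper.

```python
import itertools
import math

def permanent(matrix):
    """Permanent by direct summation over all permutations (only viable for tiny matrices)."""
    n = len(matrix)
    total = 0
    for perm in itertools.permutations(range(n)):
        term = 1
        for row, col in enumerate(perm):
            term *= matrix[row][col]
        total += term
    return total

def output_probability(unitary, in_modes, out_modes):
    """Probability of detecting photons in out_modes given photons injected in in_modes.

    Modes are listed once per photon, so [0, 0] means two photons in mode 0.
    unitary[o][i] is the amplitude for a photon to go from input i to output o.
    """
    sub = [[unitary[o][i] for i in in_modes] for o in out_modes]
    norm = 1
    for modes in (in_modes, out_modes):
        for mode in set(modes):
            norm *= math.factorial(modes.count(mode))
    return abs(permanent(sub)) ** 2 / norm

s = 1 / math.sqrt(2)
beam_splitter = [[s, s], [s, -s]]  # 50:50 beam splitter on two modes

p_one_each = output_probability(beam_splitter, [0, 1], [0, 1])
p_both_in_0 = output_probability(beam_splitter, [0, 1], [0, 0])
p_both_in_1 = output_probability(beam_splitter, [0, 1], [1, 1])
print(p_one_each, p_both_in_0, p_both_in_1)
```

The two photons never exit through different ports (the Hong-Ou-Mandel effect); they leave together through one port or the other with roughly equal probability. The brute-force sum visits n! permutations for n photons, which hints at why the calculation becomes intractable as the photon number grows.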

“The very same properties which give these chips quantum computational power makes them nearly impossible to verify,” Carolan says. In experiments, the researchers were able to “unsample” two photons that had run through the boson sampling problem on their custom NISQ chip, and in a fraction of the time it would take traditional verification approaches.

“This is an excellent paper that employs a nonlinear quantum neural network to learn the unknown unitary operation performed by a black box,” says Stefano Pirandola, a professor of computer science who specializes in quantum technologies at the University of York. “It is clear that this scheme could be very useful to verify the actual gates that are performed by a quantum circuit — [for example] by a NISQ processor. From this point of view, the scheme serves as an important benchmarking tool for future quantum engineers. The idea was remarkably implemented on a photonic quantum chip.”

While the method was designed for quantum verification purposes, it could also help capture useful physical properties, Carolan says. For instance, certain molecules when excited will vibrate, then emit photons based on these vibrations. By injecting these photons into a photonic chip, Carolan says, the unscrambling technique could be used to discover information about the quantum dynamics of those molecules to aid in bioengineering molecular design. It could also be used to unscramble photons carrying quantum information that have accumulated noise by passing through turbulent spaces or materials.

“The dream is to apply this to interesting problems in the physical world,” Carolan says.

References and Resources also include:

http://news.mit.edu/2020/verify-quantum-chips-computing-0113

International Defense Security & Technology Your trusted Source for News, Research and Analysis
