The development of a “quantum computer” is one of the outstanding technological challenges of the 21st century. A quantum computer is a machine that processes information according to the rules of quantum physics, which govern the behaviour of microscopic particles at the scale of atoms and smaller. Quantum computers gain their power from the special rules that govern qubits. Unlike classical bits, which have a value of either 0 or 1, qubits can take on an intermediate state called a superposition, meaning they hold a value of 0 and 1 at the same time. Additionally, two qubits can be entangled, with their values linked as if they were one entity, despite sitting on opposite ends of a computer chip.

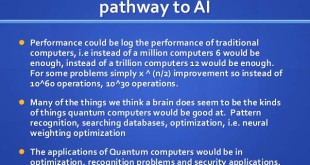

These unusual properties give quantum computers their game-changing method of calculation. Different possible solutions to a problem can be considered simultaneously, with the wrong answers canceling one another out and the right one being amplified. That allows the computer to quickly converge on the correct solution without needing to check each possibility individually. It turns out that this quantum-mechanical way of manipulating information gives quantum computers the ability to solve certain problems far more efficiently than any conceivable conventional computer.
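The cancel-and-amplify idea can be illustrated with a small classical simulation. The sketch below assumes a Grover-style search over 8 candidates (the article does not name a specific algorithm, so this choice is an assumption), and the function name `grover_search` is made up for illustration. It only tracks amplitudes in an ordinary list, so it shows the arithmetic rather than any real quantum speedup.

```python
import math

def grover_search(num_items, target):
    """Simulate Grover-style amplitude amplification on a classical list of amplitudes."""
    # Start in a uniform superposition: every candidate has the same amplitude.
    amps = [1.0 / math.sqrt(num_items)] * num_items
    # Roughly (pi / 4) * sqrt(N) rounds maximise the target's probability.
    rounds = int(math.floor(math.pi / 4 * math.sqrt(num_items)))
    for _ in range(rounds):
        # Oracle step: flip the sign of the target's amplitude.
        amps[target] = -amps[target]
        # Diffusion step: reflect every amplitude about the mean, which
        # shrinks the wrong answers and grows the marked one.
        mean = sum(amps) / num_items
        amps = [2 * mean - a for a in amps]
    # Measurement probabilities are the squared amplitudes.
    return [a * a for a in amps]

probs = grover_search(num_items=8, target=5)
best = max(range(8), key=lambda i: probs[i])
print(best, round(probs[best], 3))  # index 5, probability about 0.945
```

After two rounds the marked item holds about 94.5% of the probability, while each of the seven wrong answers is left with under 1%.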

One such problem is related to breaking secure codes, while another is searching large data sets. Quantum computers are naturally well-suited to simulating other quantum systems, which may help, for example, our understanding of complex molecules relevant to chemistry and biology. Quantum computers also have many military applications, such as efficient breaking of cryptographic codes like RSA, AI and pattern-recognition tasks such as discriminating between a missile and a decoy, and bioinformatics tasks such as efficient analysis of a new bioengineered threat using Markov chain Monte Carlo (MCMC) methods.

Unlike the binary bits of information in ordinary computers, “qubits” have the property of superposition of states, in which quantum particles have some probability of being in each of two states, designated |0⟩ and |1⟩, at the same time. One of the main difficulties of quantum computation is that decoherence destroys the information in a superposition of states contained in a quantum computer, thus making long computations impossible. If a single atom that represents a qubit gets jostled, the information the qubit was storing is lost. Additionally, each step of a calculation has a significant chance of introducing error. As a result, for complex calculations, “the output will be garbage,” says quantum physicist Barbara Terhal of the research center QuTech in Delft, Netherlands.
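As a rough illustration of what "some probability of being in each of two states" means, the sketch below represents a single qubit by two amplitudes for |0⟩ and |1⟩ and converts them to measurement probabilities using the standard Born rule. The helper names `measurement_probabilities` and `measure` are hypothetical, and the simulation is purely classical.

```python
import math
import random

def measurement_probabilities(alpha, beta):
    """Return (P(0), P(1)) for the state alpha|0> + beta|1>."""
    # Normalise so the two probabilities always sum to 1.
    norm = abs(alpha) ** 2 + abs(beta) ** 2
    return abs(alpha) ** 2 / norm, abs(beta) ** 2 / norm

def measure(alpha, beta, shots, seed=0):
    """Simulate repeated measurements; returns (count of 0s, count of 1s)."""
    rng = random.Random(seed)
    p0, _ = measurement_probabilities(alpha, beta)
    zeros = sum(1 for _ in range(shots) if rng.random() < p0)
    return zeros, shots - zeros

# Equal superposition: both outcomes are equally likely.
amp = 1 / math.sqrt(2)
print(measurement_probabilities(amp, amp))
print(measure(amp, amp, shots=1000))
```

Each individual measurement returns a definite 0 or 1; the superposition only shows up in the statistics over many runs.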

In order to reach their full potential, today’s quantum computer prototypes have to meet specific criteria. First, they have to be made bigger, which means they need to consist of a considerably higher number of quantum bits. Quantum systems made from quantum bits, or qubits, are inherently fragile: they constantly evolve in uncontrolled ways due to unwanted interactions with the environment, leading to errors in the computation. Qubits are made from sensitive substances such as individual atoms, electrons trapped within tiny chunks of silicon called quantum dots, or small bits of superconducting material, which conducts electricity without resistance. Errors can creep in as qubits interact with their environment, potentially including electromagnetic fields, heat or stray atoms or molecules.

While competing technologies and competing architectures are attacking these problems, no existing hardware platform can maintain coherence and provide the robust error correction required for large-scale computation. A breakthrough is probably several years away. Answers are coming from intense investigation across a number of fronts, with researchers in industry, academia and the national laboratories pursuing a variety of methods for reducing errors. One approach is to guess what an error-free computation would look like based on the results of computations with various noise levels. A completely different approach, hybrid quantum-classical algorithms, runs only the most performance-critical sections of a program on a quantum computer, with the bulk of the program running on a more robust classical computer. These strategies and others are proving to be useful for dealing with the noisy environment of today’s quantum computers.
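The first approach mentioned above, guessing the error-free result from runs at several noise levels, is often called zero-noise extrapolation. A minimal sketch follows, assuming the measured value degrades roughly linearly with the noise scale. The function name and the data points are hypothetical and do not come from any real device.

```python
def extrapolate_to_zero_noise(noise_scales, measured_values):
    """Fit a straight line to (noise scale, value) pairs and return its value at scale 0."""
    n = len(noise_scales)
    mean_x = sum(noise_scales) / n
    mean_y = sum(measured_values) / n
    # Ordinary least-squares slope and intercept.
    sxx = sum((x - mean_x) ** 2 for x in noise_scales)
    sxy = sum((x - mean_x) * (y - mean_y)
              for x, y in zip(noise_scales, measured_values))
    slope = sxy / sxx
    # The intercept is the estimate of the noise-free result.
    return mean_y - slope * mean_x

# Hypothetical expectation values measured with the noise deliberately
# amplified to 1x, 2x and 3x its natural level.
scales = [1.0, 2.0, 3.0]
values = [0.80, 0.62, 0.44]
estimate = extrapolate_to_zero_noise(scales, values)
print(round(estimate, 3))  # 0.98
```

In practice the dependence on noise is not guaranteed to be linear, so the choice of fitting model is itself an assumption that affects the estimate.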

While classical computers are also affected by various sources of errors, these errors can be corrected with a modest amount of extra storage and logic. Quantum error correction schemes do exist, but they consume such a large number of qubits that relatively few qubits remain for actual computation. That reduces the size of the computing task to a tiny fraction of what could run on defect-free hardware. Decoherence represents a challenge for the practical realization of quantum computers, since such machines are expected to rely heavily on the undisturbed evolution of quantum coherences. Simply put, they require that the coherence of states be preserved and that decoherence be managed in order to actually perform quantum computation. The preservation of coherence and the mitigation of decoherence effects are thus related to the concept of quantum error correction.
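To make the classical side of this comparison concrete, the sketch below computes the error rate of a simple repetition code with majority voting, assuming each stored copy flips independently with probability `p`. The function name and the 1% error rate are hypothetical. Quantum codes are more involved, since unknown quantum states cannot simply be copied, which is part of why their qubit overhead is so much larger.

```python
from itertools import product

def logical_error_rate(p, copies=3):
    """Probability that a majority vote over `copies` noisy copies yields the wrong bit.

    Assumes `copies` is odd and each copy flips independently with probability p.
    """
    total = 0.0
    # Enumerate every pattern of flipped (1) and intact (0) copies.
    for flips in product([0, 1], repeat=copies):
        k = sum(flips)
        # The vote fails only when more than half of the copies flipped.
        if k > copies // 2:
            total += p ** k * (1 - p) ** (copies - k)
    return total

# With a hypothetical 1% raw error rate, tripling the storage cuts the
# error rate to about 0.03%.
print(logical_error_rate(0.01, copies=3))
print(logical_error_rate(0.01, copies=5))
```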

Different companies are addressing the challenge of decoherence from different angles, either by using more robust quantum processes or by finding different ways to detect errors. Another direction scientists are focusing on is figuring out the best algorithms for present noisy quantum devices to perform meaningful tasks. DARPA is looking to exploit quantum information processing before fully fault-tolerant quantum computers exist. Fault-tolerant means that if one part of the computer stops working properly, it can still continue to function without going completely haywire. On February 27, 2019, DARPA announced its Optimization with Noisy Intermediate-Scale Quantum devices (ONISQ) program. The principal objective of the ONISQ program is to demonstrate a quantitative advantage of Quantum Information Processing (QIP) over the best classical methods for solving combinatorial optimization problems using Noisy Intermediate-Scale Quantum (NISQ) devices. In addition, the program will identify families of problem instances in combinatorial optimization where QIP is likely to have the biggest impact. “Here ‘intermediate scale’ refers to the size of quantum computers which will be available in the next few years, with a number of qubits ranging from 50 to a few hundred. Fifty qubits is a significant milestone, because that’s beyond what can be simulated by brute force using the most powerful existing digital supercomputers.”
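A back-of-the-envelope calculation shows why about fifty qubits is such a milestone for brute-force simulation. The sketch assumes the simulator stores the full state vector, one complex amplitude per basis state at 16 bytes each (double-precision real and imaginary parts). The function name `statevector_bytes` is made up for illustration.

```python
def statevector_bytes(num_qubits, bytes_per_amplitude=16):
    """Memory needed to store all 2**n complex amplitudes of an n-qubit state."""
    return (2 ** num_qubits) * bytes_per_amplitude

for n in (30, 40, 50):
    gib = statevector_bytes(n) / 2 ** 30
    print(f"{n} qubits: {gib:,.0f} GiB")
```

Under these assumptions 30 qubits need 16 GiB, while 50 qubits need 16 PiB (about 16.8 million GiB), and every additional qubit doubles the requirement.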

Los Alamos National Laboratory is developing a method to invent and optimize algorithms that perform useful tasks on noisy quantum computers. The main idea is to reduce the number of gates in an attempt to finish execution before decoherence and other sources of errors have a chance to unacceptably reduce the likelihood of success. Because this approach yields shorter algorithms than the state of the art, it consequently reduces the effects of noise. This machine-learning approach can also compensate for errors in a manner specific to the algorithm and hardware platform. It might find, for instance, that one qubit is less noisy than another, so the algorithm preferentially uses better qubits. In that situation, the machine learning creates a general algorithm to compute the assigned task on that computer using the fewest computational resources and the fewest logic gates. Thus optimized, the algorithm can run longer.
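The benefit of using fewer gates can be estimated with a simple model. The sketch below assumes every gate fails independently with the same probability, which is a simplification, and the 0.5% error rate and gate counts are hypothetical. It is not the Los Alamos method itself, only an illustration of why shorter circuits succeed more often.

```python
def circuit_success_probability(num_gates, error_per_gate):
    """Chance that no gate in the circuit fails, assuming independent, identical gate errors."""
    return (1 - error_per_gate) ** num_gates

# Hypothetical 0.5% error per gate: compare a long circuit with a
# shortened version of the same algorithm.
long_run = circuit_success_probability(1000, 0.005)
short_run = circuit_success_probability(200, 0.005)
print(f"1000 gates: {long_run:.4f}")
print(f" 200 gates: {short_run:.4f}")
```

With these numbers the 1000-gate circuit succeeds less than 1% of the time, while the 200-gate version succeeds roughly 37% of the time.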

The ability to hold a quantum state is called coherence. The longer the coherence time, the more operations researchers can perform in a quantum circuit before resetting it, and the more sophisticated the algorithms that can be run on it. To reduce errors, quantum computers need qubits that have long coherence times. And physicists need to be able to control quantum states more tightly, with simpler electrical or optical systems than are standard today. “There are probably about 20 suggested ways of creating qubits for quantum computers, with varying degrees of success,” says Clarina dela Cruz, science coordinator for the Quantum Materials Initiative at the Oak Ridge National Laboratory (ORNL).
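The link between coherence time and circuit length can be sketched as a simple time budget. The example assumes gates run one after another with a fixed duration. The 50 ns gate time and both coherence times are hypothetical placeholders, not measurements from any particular device.

```python
def max_sequential_gates(coherence_time_ns, gate_time_ns):
    """Upper bound on back-to-back gates that fit within the coherence time."""
    # Integer nanoseconds avoid floating-point rounding in the division.
    return coherence_time_ns // gate_time_ns

GATE_TIME_NS = 50  # hypothetical gate duration

for label, coherence_ns in (("100 microseconds", 100_000),
                            ("1 millisecond", 1_000_000)):
    print(label, max_sequential_gates(coherence_ns, GATE_TIME_NS))
```

Under these assumptions a tenfold increase in coherence time allows ten times as many sequential operations. Useful circuits are shorter than this bound, because errors accumulate well before coherence is fully lost.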

At the recent American Chemical Society Spring 2019 National Meeting in Orlando, Florida, dela Cruz described how her team uses ORNL’s neutron source, the brightest in the world, to characterize quantum materials. Because so many existing and proposed qubits store information in spin states, she says, magnetism is the most important property to study. Neutron beams can be used as a kind of microscope for studying the magnetic properties of materials.

Discovering quantum materials requires physicists, chemists, and materials scientists to work together, dela Cruz says. Materials scientists search databases for structures that match physicists’ predicted quantum materials and then seek the advice of chemists. Most of the time, she says, the chemists say something like, “That cannot be stabilized—try another element here.” Once a potential quantum material is made, researchers can use neutron-scattering experiments to study its structure and how it responds to a range of temperatures, changing magnetic and electrical fields, and other parameters. To prove that they’ve made a qubit, researchers have to extensively characterize all the possible states of their devices and figure out how to switch them with a high level of control, she adds.

“Materials science is a huge component of increasing the performance of our circuits,” says Corey Rae McRae, a quantum physicist and postdoctoral researcher at the University of Colorado Boulder and the Boulder arm of the National Institute of Standards and Technology. McRae works on superconducting qubits, which are circuits incorporating multiple devices. First, they include simple devices made from aluminum or niobium—both of which are superconducting at cryogenic temperatures. These are integrated with electron-tunneling devices made by sandwiching the same metals around insulators. Superconducting qubits can be switched between two energy levels—representing 0 and 1—with microwave pulses.

Companies like IBM, Google and Rigetti Computing use superconducting circuits cooled to temperatures close to absolute zero (−273.15 °C). Other companies, such as IonQ, trap individual atoms in electromagnetic fields on a silicon chip in ultrahigh-vacuum chambers. All these strategies share a common goal: isolate the qubits in a controlled quantum state. Scientists handle these types of qubits with great care to eliminate all possible sources of noise. First, they are kept extremely cold in helium refrigerators like the ones in Rigetti’s labs. And engineers use only the most delicate microwave pulses to interact with them; they typically apply only a single photon, McRae says. At these low temperatures and photon levels, one of the noisiest things is the insulating material in the device itself. Typically made of aluminum oxide, the insulator can act like an antenna, absorbing microwave pulses rather than allowing them to transmit quantum states and, in effect, muddying the information encoded in those pulses.

McRae wants to minimize the loss of these microwave pulses. She is designing chips that blow up the qubit, separating all the parts on the same wafer so that losses can be measured from each one individually. Then their individual losses can be added up. McRae and others are also using similar methods to test alternative materials.

At Rigetti, Hong and other engineers are testing how slight changes in manufacturing affect the coherence times of the company’s superconducting qubits. She says making a good interface between the underlying silicon wafer and the device’s metal layer, usually aluminum, is a particular focus. “You can almost double the coherence time” by making clean interfaces, she says. The company is also honing the control electronics used to generate and carry microwave signals to individual qubits.

Scientists Just Found a Way to Make Quantum States Last 10,000 Times Longer

One of the major challenges in turning quantum technology from potential to reality is getting super-delicate quantum states to last longer than a few milliseconds – and scientists just raised the bar by a factor of about 10,000. They did it by tackling something called decoherence: the disruption from surrounding noise caused by vibrations, fluctuations in temperature, and interference from electromagnetic fields that can very easily break a quantum state.

“With this approach, we don’t try to eliminate noise in the surroundings,” says quantum engineer Kevin Miao, from the University of Chicago. “Instead, we trick the system into thinking it doesn’t experience the noise.” By applying a continuous alternating magnetic field to a type of quantum system called a solid-state qubit, in addition to the standard electromagnetic pulses required to keep such a system under control, the team was able to ‘tune out’ unnecessary noise. The researchers compare it to sitting on a merry-go-round – the faster you go, the less able you are to hear the noise of your surroundings, as it all blurs into one. In this case, spinning electrons are the merry-go-round.

Using the new approach, the solid-state qubit system was able to stay stable for 22 milliseconds – four orders of magnitude or 10,000 times longer compared with previous efforts, though still less than a tenth of a blink of an eye. The qubit is the quantum version of a standard computer bit, but instead of only being able to code 1s and 0s, it can achieve a state of superposition that makes it far more powerful.

Decoherence is something of a nemesis for quantum scientists. Other attempts to reduce background noise have looked at perfectly isolating quantum systems – which is technically very challenging – or using the purest possible materials to build them – which can quickly get expensive.

References and resources also include:

https://blogs.scientificamerican.com/observations/the-problem-with-quantum-computers/

International Defense Security & Technology Your trusted Source for News, Research and Analysis
