Just as classical computers are unthinkable without memory, quantum memories will be essential elements of future quantum information processors. Quantum memories are devices that can store quantum information, such as the quantum state of a photon, for a long time with very high fidelity and efficiency, and without destroying the volatile quantum information that the photon carries. A quantum memory should be able to release a photon with the same quantum state as the stored photon after a duration set by the user.

Quantum memories open manifold opportunities like high-speed quantum cryptography networks, large-scale quantum computers, and quantum simulators. Incorporating storage units for quantum information into quantum computers may allow researchers to build such devices with several orders of magnitude fewer qubits in their processors.

Far Fewer Qubits Required for “Quantum Memory” Quantum Computers reported in Sep 2021

So far, research on quantum memory—units for storing quantum information—has largely focused on its use in quantum communications and networks. Now, Élie Gouzien and Nicolas Sangouard of the French Alternative Energies and Atomic Energy Commission have investigated how quantum memory might be used in computations. The duo shows that a quantum computer architecture that incorporates a quantum memory could perform calculations with 3 orders of magnitude fewer qubits in its processor than standard architectures require, making the devices potentially easier to realize.

Researchers consider superconducting qubits one of the most promising technologies for building a quantum computer. But a challenge of using such qubits is that a large number are required for the standard superconducting-qubit-computer architecture, whose processor typically consists of a 2D grid of qubits in which computations are done using interactions of neighboring qubits.

In their work, Gouzien and Sangouard instead considered a 2D grid of qubits connected to a quantum memory that is organized in 3D. To compare this architecture to the standard one, they analyzed how it would perform the task of finding the prime factors of very large integers called RSA integers. They found that this quantum computer design with a quantum memory could factor a 2048-bit RSA integer with just 13,436 qubits, while the standard architecture, with no quantum memory, might require some twenty million qubits for this task.

The trade-off is run time: the standard architecture is estimated to take just 8 hours for the factorization, whereas the quantum memory architecture would require 177 days. But Gouzien and Sangouard say that the approach is worth further investigation, as the substantially smaller number of qubits required makes it much more feasible in the near term.
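To make the trade-off concrete, the short calculation below compares the two sets of figures quoted above. It is only arithmetic on the numbers in the text; the variable names are illustrative and nothing here reproduces the authors' resource-estimation method.

```python
import math

# Figures quoted in the text (estimates attributed to Gouzien and Sangouard).
QUBITS_STANDARD = 20_000_000   # "some twenty million" qubits, 2D grid only
QUBITS_WITH_MEMORY = 13_436    # processor qubits when a quantum memory is attached
HOURS_STANDARD = 8             # estimated run time, standard architecture
DAYS_WITH_MEMORY = 177         # estimated run time, quantum memory architecture

# How many times fewer processor qubits the memory-based design needs.
qubit_ratio = QUBITS_STANDARD / QUBITS_WITH_MEMORY

# How many times longer the memory-based design runs.
time_ratio = (DAYS_WITH_MEMORY * 24) / HOURS_STANDARD

print(f"qubit reduction: about {qubit_ratio:.0f}x "
      f"(10^{math.log10(qubit_ratio):.1f})")
print(f"run-time increase: about {time_ratio:.0f}x")
```

The qubit count drops by a factor of roughly 1,500, which is the "3 orders of magnitude" cited above, while the run time grows by a factor of 531.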

Random access quantum memory (RAQM)

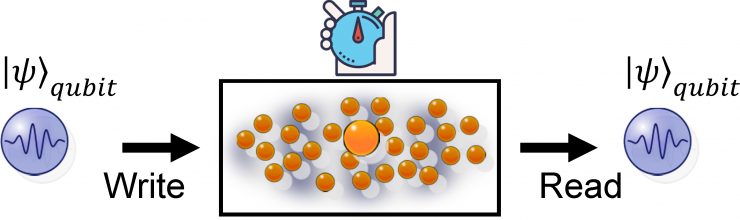

Classical random access memory, with its programmable access to many memory cells and site-independent access time, has found wide application in information technology. Similarly, to realize quantum computation or communication networks, it is desirable to have a random access quantum memory (RAQM) that can store many qubits, address each qubit in its memory cell individually, and programmably write a qubit in from, and read it out to, a flying bus qubit with site-independent access time.

The write-in and read-out operations require a good quantum interface between the bus qubits, typically carried by photonic pulses, and the memory qubits, which are usually realized with atomic spin states. A good quantum interface should be able to faithfully map quantum states between the memory qubits and the bus qubits. A convenient implementation of the quantum interface is based on the directional coupling of an ensemble of atoms with the forward-propagating signal photon pulse, induced by the collective enhancement effect.

A number of experiments have demonstrated this kind of quantum interface and its applications, both in atomic ensembles and in solid-state spin ensembles in low-temperature crystals.

To scale up the capacity of a quantum memory, which is important for its applications, an efficient method is memory multiplexing, which uses multiple spatial modes, temporal modes, or angular directions within a single atomic or solid-state ensemble. Through multiplexing of spatial modes, recent experiments have realized a dozen to hundreds of memory cells in a single atomic ensemble. However, write-in and read-out of external quantum signals have not yet been demonstrated in those systems.

With temporal multiplexing, a sequence of time-bin qubits has been stored in a solid-state ensemble. However, because individual addressing is lacking, the whole pulse sequence has to be read out together, with a fixed order and a fixed interval between the pulses. It remains a challenge to demonstrate a RAQM with programmable, on-demand control over the write-in and read-out of each individual quantum signal stored in the memory cells.

In a Nature article of 2019, N. Jiang and others write, “We demonstrate a RAQM which can store 105 qubits in its 210 memory cells using the dual-rail representation of a qubit. A pair of memory cells stores the state of the input photonic qubit, which is carried by the two paths of a very weak coherent pulse. We have measured the fidelities and the efficiencies for the write-in, storage, and read-out operations for all the 105 pairs of memory cells. The fidelities, typically around or above 90%, are significantly higher than the classical bound and therefore confirm quantum storage. To demonstrate the key random access property, we show that different external optical qubits can be written into the multi-cell quantum memory, stored there simultaneously, and read out later on-demand by any desired order with the storage time individually controlled for each qubit. The fidelities for all the qubits still significantly exceed the classical bound with negligible crosstalk errors between them.”
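The addressing scheme described in the quote can be illustrated with a purely classical bookkeeping sketch. It assumes a hypothetical layout in which qubit `a` occupies cells `2a` and `2a + 1`; the experiment's actual cell layout is not given in the text. The class `ToyRAQM` and its methods are invented for illustration, and storing two complex amplitudes in a dictionary only mimics the dual-rail idea, it does not simulate the physics.

```python
from dataclasses import dataclass, field

NUM_CELLS = 210                  # memory cells reported in the quoted experiment
NUM_QUBITS = NUM_CELLS // 2      # dual-rail: two cells per qubit, so 105 qubits


def cell_pair(address: int) -> tuple[int, int]:
    """Map a qubit address to its two cells (hypothetical layout: 2a, 2a+1)."""
    if not 0 <= address < NUM_QUBITS:
        raise IndexError(f"qubit address {address} out of range")
    return 2 * address, 2 * address + 1


@dataclass
class ToyRAQM:
    """Classical stand-in for a random access quantum memory."""
    cells: dict[int, complex] = field(default_factory=dict)

    def write(self, address: int, alpha: complex, beta: complex) -> None:
        """Store the two path amplitudes of a dual-rail qubit."""
        first, second = cell_pair(address)
        if first in self.cells:
            raise ValueError(f"qubit address {address} is already occupied")
        self.cells[first] = alpha
        self.cells[second] = beta

    def read(self, address: int) -> tuple[complex, complex]:
        """Release the stored amplitudes; the cells are emptied, not copied."""
        first, second = cell_pair(address)
        return self.cells.pop(first), self.cells.pop(second)


memory = ToyRAQM()
memory.write(0, 1, 0)        # written first
memory.write(104, 0, 1)      # written second, at the last address
print(memory.read(104))      # read out first: order is chosen at read time
print(memory.read(0))
```

Reading removes the entry rather than returning a copy, which mirrors the requirement that a quantum memory releases the stored state instead of duplicating it.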

Quantum Memory Management

Quantum memory management is critical to any quantum computer system where the quantum program (a sequence of quantum logic gates and measurements) requires the bulk of the system's resources, for example by demanding nearly all of the available qubits. This problem is pervasive in quantum compiling, since typical quantum algorithms require a significant number of qubits and gates. They usually rely on a key component called a quantum oracle, which implements heavy arithmetic with extensive use of ancilla qubits (scratch memory).

Fred Chong, the Seymour Goodman Professor of Computer Architecture at the University of Chicago, and others explain that, much like a classical memory manager, a quantum memory manager aims to allocate and free portions of quantum memory (qubits) for computation dynamically. But, unlike a classical memory manager, its quantum counterpart must respect a few characteristics of quantum memory, such as:

- Data has limited lifetime, as qubits can decohere spontaneously.

- Data cannot be copied in general, due to the quantum no-cloning theorem.

- Reading data (measurement) or incoherent noise on some qubits can permanently alter their data, as well as the data on other qubits entangled with them.

- Data processing (computation) is performed directly in memory, and quantum logic gates can be reversed by applying their inverses.

- Data locality matters, as two-qubit gates are accomplished by interacting the operand qubits.

These characteristics of quantum memory profoundly influence the design of quantum computer architectures in general, and complicate the implementation of a memory manager.

One of the fundamental limitations of a quantum computer system is the inability to make copies of an arbitrary qubit. This is the no-cloning theorem, due to Wootters and Zurek. In classical computing, we are used to sharing state by copying data when designing and programming algorithms. The no-cloning limitation prevents us from directly implementing a quantum analog of the classical memory hierarchy, as caches require making copies of data.

Hence, current quantum computer architecture proposals follow the general principles that transformations are applied directly to quantum memory, and that data in memory are moved but not copied. However, we are allowed to make an entangled copy of a qubit. This type of shared state has the special property that the state of one part of the memory system cannot be fully described without considering the other part(s). Measurements (reads) on such systems typically result in highly correlated outcomes. As such, reads and writes on entangled states must be handled with care. In classical memory systems, reads and writes must follow models of cache coherence and memory consistency to ensure correctness on shared state. In a quantum system, we can no longer easily read from or write to the quantum memory, as a read is done through measurement (which likely alters the data) and a write generally requires complex state preparation routines.
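The difference between an entangled copy and a true clone can be checked with a few lines of state-vector arithmetic. The sketch below assumes the standard textbook construction, a CNOT gate with the data qubit as control and a fresh |0⟩ qubit as target, and uses plain Python lists as state vectors in the order |00⟩, |01⟩, |10⟩, |11⟩. The function names are illustrative.

```python
import math


def kron(a, b):
    """Tensor product of two state vectors given as lists of amplitudes."""
    return [x * y for x in a for y in b]


def cnot(state):
    """CNOT on two qubits (first is control): swaps the |10> and |11> amplitudes."""
    s00, s01, s10, s11 = state
    return [s00, s01, s11, s10]


def entangled_copy(qubit):
    """Attach a fresh |0> qubit and apply CNOT: what hardware can actually do."""
    return cnot(kron(qubit, [1.0, 0.0]))


def ideal_clone(qubit):
    """Two independent copies of the state: what no-cloning forbids in general."""
    return kron(qubit, qubit)


def close(u, v, tol=1e-12):
    """Element-wise comparison of two state vectors."""
    return all(abs(x - y) <= tol for x, y in zip(u, v))


zero = [1.0, 0.0]                                # a classical basis state
plus = [1 / math.sqrt(2), 1 / math.sqrt(2)]      # an equal superposition

print(close(entangled_copy(zero), ideal_clone(zero)))   # basis state: same result
print(close(entangled_copy(plus), ideal_clone(plus)))   # superposition: they differ
print(entangled_copy(plus))                              # amplitudes only on |00>, |11>
```

For a basis state the two constructions agree, which is why classical bits can be copied freely. For a superposition, the CNOT yields a state with amplitude only on |00⟩ and |11⟩, so neither qubit can be described on its own, whereas a clone would be a product of two independent qubits.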

Garbage Collection Done Strategically

Relying on programmers to keep track of all qubit usage is neither scalable nor efficient. Design automation therefore plays a crucial role in making programming manageable and algorithms practical. Several techniques have been developed to reduce the number of qubits used by quantum programs. The effectiveness of a memory manager can significantly affect program performance; a good memory manager fulfills the allocation requests of a high-level quantum circuit by locating and reusing qubits from a highly constrained pool of memory.

When a program requires new allocations of qubits, the memory manager faces a decision: whether to assign brand-new unused qubits or to reuse reclaimed qubits. It may seem that reusing reclaimed qubits whenever they are available is the most economical strategy, for it minimizes the number of qubits used by a program. However, qubit reuse can reduce program parallelism. Operations that could have been performed in parallel are now forced to be scheduled after the last use of the reclaimed qubits. This additional data dependency can lengthen the overall time to complete the program. Hardware constraints, such as reliability and locality, can also affect allocation decisions. Some qubits may be more reliable than others, so it can be beneficial to prioritize the more reliable qubits while balancing the workload on each qubit. Some qubits may be closer together than others, so multi-qubit operations performed on distant qubits incur communication overhead. Ideally, an efficient qubit allocator makes its decisions based on program structure and hardware constraints, and reuses qubits judiciously.
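The reuse-versus-fresh decision can be sketched as a small allocation policy. Everything below is hypothetical: the `Qubit` and `ToyAllocator` names, the single `reliability` score, and the three-step preference order are illustrative assumptions rather than the method of any particular compiler, and locality is not modeled at all.

```python
from dataclasses import dataclass


@dataclass
class Qubit:
    index: int
    reliability: float   # hypothetical score, higher means more reliable
    free_at: int = 0     # time step at which the qubit's last use ends


class ToyAllocator:
    """Prefer reuse that costs no waiting, then fresh qubits, then delayed reuse."""

    def __init__(self, reliabilities):
        self.fresh = [Qubit(i, r) for i, r in enumerate(reliabilities)]
        self.reclaimed = []

    def allocate(self, ready_time):
        """Return (qubit, start_time) for an operation that could start at ready_time."""
        no_delay = [q for q in self.reclaimed if q.free_at <= ready_time]
        if no_delay:
            # Reuse is free here: it adds no scheduling dependency.
            choice = max(no_delay, key=lambda q: q.reliability)
            self.reclaimed.remove(choice)
        elif self.fresh:
            # Spend a new qubit to keep the operation parallel.
            choice = max(self.fresh, key=lambda q: q.reliability)
            self.fresh.remove(choice)
        elif self.reclaimed:
            # Pool exhausted: wait for the reclaimed qubit that frees up soonest.
            choice = min(self.reclaimed, key=lambda q: q.free_at)
            self.reclaimed.remove(choice)
        else:
            raise RuntimeError("no qubits available")
        return choice, max(ready_time, choice.free_at)

    def free(self, qubit, time):
        """Reclaim a qubit whose last operation finishes at `time`."""
        qubit.free_at = time
        self.reclaimed.append(qubit)


allocator = ToyAllocator([0.90, 0.99])
q1, t1 = allocator.allocate(0)   # takes the more reliable fresh qubit
allocator.free(q1, 5)            # q1 stays busy until time step 5
q2, t2 = allocator.allocate(3)   # uses a fresh qubit rather than waiting for q1
q3, t3 = allocator.allocate(4)   # pool exhausted: reuses q1, start slips to 5
print((q1.index, t1), (q2.index, t2), (q3.index, t3))
```

The third request shows the cost described above: once only a reclaimed qubit remains, the operation inherits a dependency on that qubit's previous use and its start time is pushed back.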

References and Resources also include:

https://physics.aps.org/articles/v14/s117

https://www.sigarch.org/putting-qubits-to-work-quantum-memory-management/

International Defense Security & Technology Your trusted Source for News, Research and Analysis
