The quest for quantum computing supremacy is a geopolitical priority for Europe, China, Canada, Australia and the United States. The quantum computing market was valued at $472m earlier in 2021 and is expected to reach $1.7bn by 2026, according to a Markets and Markets projection. A 2018 Boston Consulting Group report estimates that the quantum computing market will approach $60 billion in 2035 and grow further to $295 billion by 2050, which explains why nations, corporates and startups alike are all jockeying for first position. The advantage gained by developing the first computer that renders all other computers obsolete would be enormous, bestowing economic, military and public health benefits on the winner.

Unlike a digital computer, whose bits can take only one of two values, “1” or “0,” qubits have a special property termed superposition, which allows them to be in a combination of 0 and 1 at the same time. This is often described as letting quantum computers weed through millions of solutions at once, while desktop PCs would have to consider them one at a time; in practice, quantum algorithms exploit interference between superposed states to make correct answers more likely when the qubits are measured. Researchers are racing to develop a universal quantum computer that can be programmed to perform any computing task and that is expected to be exponentially faster than classical computers for a number of important applications in science and business. But current machines contain just a few dozen quantum bits, or qubits, too few to do anything dazzling.
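
As a concrete illustration, the minimal sketch below (plain Python with no quantum SDK; the function names are illustrative and not part of Qiskit) builds the state vector of n qubits in an equal superposition, as a Hadamard gate on each qubit would produce. It shows that n qubits are described by 2**n amplitudes whose squared magnitudes are the measurement probabilities, and that a measurement still returns a single bitstring.

```python
import math
import random


def uniform_superposition(n_qubits: int) -> list[complex]:
    """State vector after a Hadamard on each of n qubits: 2**n equal amplitudes."""
    dim = 2 ** n_qubits
    amplitude = 1 / math.sqrt(dim)
    return [complex(amplitude, 0.0) for _ in range(dim)]


def probabilities(state: list[complex]) -> list[float]:
    """Born rule: each basis outcome occurs with probability |amplitude|**2."""
    return [abs(a) ** 2 for a in state]


def measure(state: list[complex], rng: random.Random) -> str:
    """Sample one basis outcome; each shot yields a single bitstring, not all of them."""
    n_qubits = int(math.log2(len(state)))
    index = rng.choices(range(len(state)), weights=probabilities(state))[0]
    return format(index, f"0{n_qubits}b")


state = uniform_superposition(3)
print(len(state))                            # 3 qubits -> 8 amplitudes
print(round(sum(probabilities(state)), 12))  # probabilities sum to 1
print(measure(state, random.Random(0)))      # a single 3-bit outcome
```

Simulating n qubits this way needs memory proportional to 2**n, which is one reason classical simulation becomes impractical as qubit counts grow.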

The IBM Quantum team builds quantum processors—computer processors that rely on the mathematics of elementary particles in order to expand our computational capabilities, running quantum circuits rather than the logic circuits of digital computers. We represent data using the electronic quantum states of artificial atoms known as superconducting transmon qubits, which are connected and manipulated by sequences of microwave pulses in order to run these circuits. But qubits quickly forget their quantum states due to interaction with the outside world. The biggest challenge facing our team today is figuring out how to control large systems of these qubits for long enough, and with few enough errors, to run the complex quantum circuits required by future quantum applications.

IBM had planned a massive $3 billion research and development investment over the next 5 years into quantum computers, post-silicon era chips, neurosynaptic computers which mimic the behavior of living brains, carbon nanotubes, graphene tools and a variety of other technologies. IBM’s investment is one of the largest for quantum computing to date.

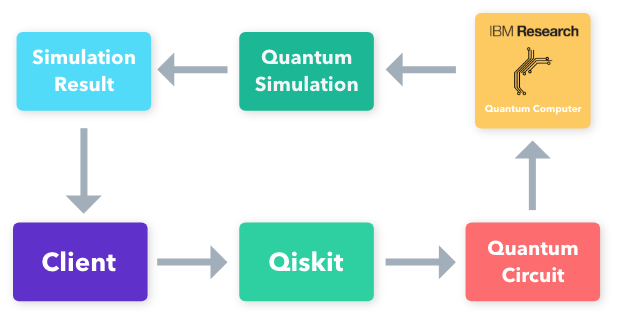

We put the first quantum computer on the cloud in 2016, and in 2017, we introduced an open-source software development kit for programming these quantum computers, called Qiskit. Aside from its 50-qubit machine, IBM also has a 20-qubit quantum computing system that’s accessible to third-party users through its cloud computing platform. IBM managed to maintain the quantum state in both systems for 90 microseconds, a record feat in the quantum world.

We debuted the first integrated quantum computer system, called the IBM Quantum System One, in 2019, then in 2020 we released our development roadmap showing how we planned to mature quantum computers into a commercial technology.

As part of that roadmap, in 2021 we broke the 100-qubit processor barrier with our 127-qubit IBM Quantum Eagle processor and launched Qiskit Runtime, a runtime environment of co-located classical and quantum systems built to support containerized execution of quantum circuits at speed and scale. That same year, we demonstrated a 120x speedup on a research-grade quantum workload, simulating molecules, thanks to a host of improvements including the ability to run quantum programs entirely on the cloud with Qiskit Runtime. Earlier this year, we launched the Qiskit Runtime Services with primitives: pre-built programs that give algorithm developers easy access to the outputs of quantum computations without requiring an intricate understanding of the hardware.

IBM announced earlier that the U.S. Intelligence Advanced Research Projects Activity (IARPA) has notified IBM that it will award its scientists a major multi-year research grant to advance the building blocks for a universal quantum computer. U.S. intelligence agencies are now pushing the development of quantum computers that could break the encryption used by terrorists.

IBM 2020 Quantum Roadmap

In September 2020, IBM announced its roadmap to reaching 1,000-plus qubits by 2023. It’s an aggressively ambitious mission, which recognizes that merely incremental increases in qubit counts and more sophisticated algorithms alone will not deliver Quantum Advantage: the point where certain information processing tasks can be performed more efficiently or cost-effectively on a quantum computer than on a classical one. The roadmap, announced at the annual IBM Quantum Summit, aims to take the technology from today’s noisy, small-scale devices to the million-plus qubit devices of the future. (A qubit is the basic unit of quantum information, analogous to the bits of classical computing.) Such progress is essential if quantum computers are to help industry, government and research organizations tackle some of the world’s biggest challenges.

“The 1,121-qubit Condor chip is the inflection point for lower-noise qubits. By 2023, its physically smaller qubits, with on-chip isolators and signal amplifiers and multiple nodes, will have scaled to deliver the capability of Quantum Advantage,” said Gargi Dasgupta, Director of IBM Research India.

“We’re very excited,” says Prineha Narang, co-founder and chief technology officer of Aliro Quantum, a startup that specializes in code that helps higher level software efficiently run on different quantum computers. “We didn’t know the specific milestones and numbers that they’ve announced,” she says. The plan includes building intermediate-size machines of 127 and 433 qubits in 2021 and 2022, respectively, and envisions following up with a million-qubit machine at some unspecified date. Dario Gil, IBM’s director of research, says he is confident his team can keep to the schedule. “A road map is more than a plan and a PowerPoint presentation,” he says. “It’s execution.” Google has its own plan to build a million-qubit quantum computer within 10 years, as Hartmut Neven, who leads Google’s quantum computing effort, explained in an April interview, although he declined to reveal a specific timeline for advances.

Our team is developing a suite of scalable, increasingly larger and better processors, with a 1,000-plus qubit device, called IBM Quantum Condor, targeted for the end of 2023. In order to house even more massive devices beyond Condor, we’re developing a dilution refrigerator larger than any currently available commercially. This roadmap puts us on a course toward the future’s million-plus qubit processors thanks to industry-leading knowledge, multidisciplinary teams, and agile methodology improving every element of these systems. All the while, our hardware roadmap sits at the heart of a larger mission: to design a full-stack quantum computer deployed via the cloud that anyone around the world can program.

Prior to Condor’s launch, IBM will release the 433-qubit Osprey processor. It will also debut the 127-qubit Eagle chip, part of its effort to reduce qubit errors and scale to the number of qubits needed to reach Quantum Advantage.

A 1,000-qubit machine is a particularly important milestone in the development of a full-fledged quantum computer, researchers say. Such a machine would still be 1,000 times too small to fulfill quantum computing’s full potential, such as breaking current internet encryption schemes, but it would be big enough to spot and correct the myriad errors that ordinarily plague the finicky quantum bits.

With their planned 1121-qubit machine, IBM researchers would be able to maintain a handful of logical qubits and make them interact, says Jay Gambetta, a physicist who leads IBM’s quantum computing efforts. That’s exactly what will be required to start to make a full-fledged quantum computer with thousands of logical qubits. Such a machine would mark an “inflection point” in which researchers’ focus would switch from beating down the error rate in the individual qubits to optimizing the architecture and performance of the entire system, Gambetta says.

IBM’s declared timeline comes with an obvious risk that everyone will know if it misses its milestones. But the company decided to reveal its plans so that its clients and collaborators would know what to expect. Dozens of quantum-computing startup companies use IBM’s current machines to develop their own software products, and knowing IBM’s milestones should help developers better tailor their efforts to the hardware, Gil says.

Users have run more than 300,000 quantum experiments on the IBM Cloud. Researchers at Google have developed a similar device, although they have not made it accessible to the public. Both of these computers use superconducting qubits built using techniques from the conventional computer chip industry.

We are proud of our work. Today, we maintain more than two dozen stable systems on the IBM Cloud for our clients and the general public to experiment on, including our 5-qubit IBM Quantum Canary processors and our 27-qubit IBM Quantum Falcon processors—on one of which we recently ran a long enough quantum circuit to declare a Quantum Volume of 64. This achievement wasn’t a matter of building more qubits; instead, we incorporated improvements to the compiler, refined the calibration of the two-qubit gates, and issued upgrades to the noise handling and readout based on tweaks to the microwave pulses. Underlying all of that is hardware with world-leading device metrics fabricated with unique processes to allow for reliable yield.

Simultaneous to our efforts to improve our smaller devices, we are also incorporating the many lessons learned into an aggressive roadmap for scaling to larger systems. In fact, this month we quietly released our 65-qubit IBM Quantum Hummingbird processor to our IBM Q Network members. This device features 8:1 readout multiplexing, meaning we combine readout signals from eight qubits into one, reducing the total amount of wiring and components required for readout and improving our ability to scale, while preserving all of the high performance features from the Falcon generation of processors. We have significantly reduced the signal processing latency time in the associated control system in preparation for upcoming feedback and feed-forward system capabilities, where we’ll be able to control qubits based on classical conditions while the quantum circuit runs.
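
The wiring saving from 8:1 readout multiplexing is simple arithmetic. The sketch below counts readout lines only; it assumes every multiplexed line carries up to eight qubits and ignores control lines, couplers and any spare channels, so it is an idealized illustration rather than Hummingbird’s actual wiring plan. The helper name is hypothetical.

```python
import math


def readout_lines(n_qubits: int, mux_ratio: int = 8) -> int:
    """Readout lines needed when up to `mux_ratio` qubits share one line."""
    if n_qubits < 0 or mux_ratio < 1:
        raise ValueError("need n_qubits >= 0 and mux_ratio >= 1")
    return math.ceil(n_qubits / mux_ratio)


n = 65  # Hummingbird qubit count from the text
print(f"{n} qubits: {readout_lines(n, 1)} lines without multiplexing, "
      f"{readout_lines(n)} lines with 8:1 multiplexing")
```

Under these assumptions, 65 qubits need 9 shared readout lines instead of 65 dedicated ones.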

In 2021, IBM will debut the 127-qubit “Eagle” chip.

Next year, we’ll debut our 127-qubit IBM Quantum Eagle processor. Eagle features several upgrades in order to surpass the 100-qubit milestone: crucially, through-silicon vias (TSVs) and multi-level wiring provide the ability to effectively fan-out a large density of classical control signals while protecting the qubits in a separated layer in order to maintain high coherence times. Meanwhile, we’ve struck a delicate balance of connectivity and reduction of crosstalk error with our fixed-frequency approach to two-qubit gates and hexagonal qubit arrangement introduced by Falcon. This qubit layout will allow us to implement the “heavy-hexagonal” error-correcting code that our team debuted last year, so as we scale up the number of physical qubits, we will also be able to explore how they’ll work together as error-corrected logical qubits—every processor we design has fault tolerance considerations taken into account.

Eagle will be followed by the 433-qubit “Osprey” processor in 2022

With the Eagle processor, we will also introduce concurrent real-time classical compute capabilities that will allow for execution of a broader family of quantum circuits and codes. The design principles established for our smaller processors will set us on a course to release a 433-qubit IBM Quantum Osprey system in 2022. More efficient and denser controls and cryogenic infrastructure will ensure that scaling up our processors doesn’t sacrifice the performance of our individual qubits, introduce further sources of noise, or take up too large a footprint.

In 2023, we will debut the 1,121-qubit IBM Quantum Condor processor

In 2023, we will debut the 1,121-qubit IBM Quantum Condor processor, incorporating the lessons learned from previous processors while continuing to lower the critical two-qubit errors so that we can run longer quantum circuits. We think of Condor as an inflection point, a milestone that marks our ability to implement error correction and scale up our devices, while being complex enough to explore potential Quantum Advantages: problems that we can solve more efficiently on a quantum computer than on the world’s best supercomputers.

The development required to build Condor will have solved some of the most pressing challenges in the way of scaling up a quantum computer. However, as we explore realms even further beyond the thousand qubit mark, today’s commercial dilution refrigerators will no longer be capable of effectively cooling and isolating such potentially large, complex devices.

That’s why we’re also introducing a 10-foot-tall and 6-foot-wide “super-fridge,” internally codenamed “Goldeneye,” a dilution refrigerator larger than any commercially available today. Our team has designed this behemoth with a million-qubit system in mind—and has already begun fundamental feasibility tests. Ultimately, we envision a future where quantum interconnects link dilution refrigerators each holding a million qubits like the intranet links supercomputing processors, creating a massively parallel quantum computer capable of changing the world.

IBM’s 2022 Roadmap

IBM’s most recent development roadmap predicts the company will scale its quantum technology to more than 1,000 qubits and operate parallelized quantum processors next year. The following year introduces error suppression and mitigation techniques, which IBM says lay the groundwork for quantum error correction, one of the major keys to unlocking quantum computing’s full potential.

Quantum-centric supercomputers

We aren’t just thinking about quantum computers, though. We’re trying to induce a paradigm shift in computing overall. For many years, CPU-centric supercomputers were society’s processing workhorse, with IBM serving as a key developer of these systems. In the last few years, we’ve seen the emergence of AI-centric supercomputers, where CPUs and GPUs work together in giant systems to tackle AI-heavy workloads.

Now, IBM is ushering in the age of the quantum-centric supercomputer, where quantum resources — QPUs — will be woven together with CPUs and GPUs into a compute fabric. Our goal is to build quantum-centric supercomputers. The quantum-centric supercomputer will incorporate quantum processors, classical processors, quantum communication networks, and classical networks, all working together to completely transform how we compute.

We think that the quantum-centric supercomputer will serve as an essential technology for those solving the toughest problems, those doing the most ground-breaking research, and those developing the most cutting-edge technology.

In order to do so, we need to solve the challenge of scaling quantum processors, develop a runtime environment for providing quantum calculations with increased speed and quality, and introduce a serverless programming model to allow quantum and classical processors to work together frictionlessly.

Focusing on an arrival date for quantum computing could even be dangerous, both from the standpoint of missed business opportunities and potential security threats. Michael Osborne, who leads security and privacy activities at IBM’s research center in Switzerland, says the public “cannot afford to risk not being ready” for quantum advances.

“You can’t wait until such a machine is here, you have to be preparing way ahead for when these things advance,” said Osborne. “For organizations where quantum computers are going to have an impact, whether good or bad, that journey starts now because we can’t predict when there’s going to be a major breakthrough in certain forms of hybrid memory, which is really going to advance how quickly large machines are available.”

Furthermore, experts say, it will take years for researchers to tackle multiple challenges that will allow quantum technology to become commercially viable. The industry has yet to reach consensus on even basic questions about quantum computing, such as which materials are best suited for quantum chips. Imec, for example, relies on silicon in its CMOS-compatible fabrication technique, which limits defects that can cause qubits to lose energy. Researchers at the U.S. Department of Energy-affiliated Fermilab, on the other hand, say silicon decreases the lifespan of qubits through a process known as quantum decoherence, and thus may be less appropriate for quantum chips than sapphire or another material.

IBM Quantum Technology Advancements

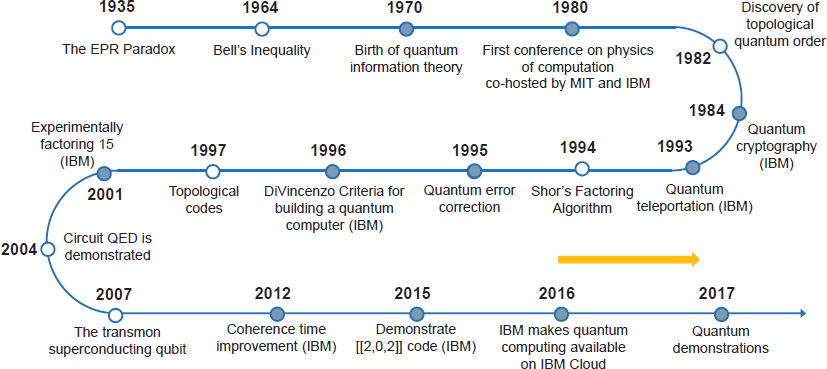

IBM has been exploring superconducting qubits since the mid-2000s, increasing coherence times and decreasing errors to enable multi-qubit devices in the early 2010s. Continued refinements and advances at every level of the system from the qubits to the compiler allowed us to put the first quantum computer in the cloud in 2016. IBM opened public access to its quantum processors to serve as an enablement tool for scientific research, a resource for university classrooms, and a catalyst of enthusiasm for the field. The quantum processor offered was composed of five superconducting qubits and housed at the IBM T.J. Watson Research Center in New York.

Challenges for building practical, large-scale programmable quantum computers

To maximise the potential of quantum computers, the industry must solve challenges in cryogenics, production, and the behaviour of materials at very low temperatures. This is one of the reasons why IBM built its super-fridge to house Condor, IBM Research’s Director Gargi Dasgupta explained. Quantum processors require special conditions to operate: they must be kept near absolute zero, and IBM’s quantum chips, for example, are kept at 15 mK. The deep complexity and the need for specialised cryogenics are why IBM’s quantum computers, at least, are accessible via the cloud, and will be for the foreseeable future, noted Dasgupta, who is also IBM’s CTO for the South Asia region.

IBM is already preparing a jumbo liquid-helium refrigerator, or cryostat, to hold a quantum computer with 1 million qubits. The IBM road map doesn’t specify when such a machine could be built. But if company researchers really can build a 1000-qubit computer in the next 2 years, that ultimate goal will sound far less fantastical than it does now.

The long-term goal is what’s called a fault tolerant quantum computer, one that uses error correction to keep calculations humming even when individual qubits, the data processing element at the heart of quantum computers, are perturbed. Unlike classical bits that are subject to only digital bit-flip errors, quantum bits are susceptible to a much larger spectrum of errors, for which any complete quantum error-correcting code must account. Quantum information is very fragile because all existing qubit technologies lose their information when interacting with matter and electromagnetic radiation. Theorists have found ways to preserve the information much longer by spreading information across many physical qubits.

Detecting quantum errors

The ability to detect and deal with errors when manipulating quantum systems is a fundamental requirement for fault-tolerant quantum computing. Unlike a classical computer, which is based on binary bits that can take only two values, a quantum computer is based on quantum bits (qubits) that can hold a value of 1 or 0 as well as both values at the same time, a property described as superposition and simply denoted as “0+1”. This superposition property, combined with interference, is what allows quantum computers to explore many possibilities at once and, for suitable problems, find the correct solution among millions of possibilities much faster than a conventional computer. The sign of this superposition is important because the states 0 and 1 have a phase relationship to each other.

One of the great challenges for scientists seeking to harness the power of quantum computing is controlling or removing quantum decoherence – the creation of errors in calculations caused by interference from factors such as heat, electromagnetic radiation, and material defects. The errors are especially acute in quantum machines, since quantum information is so fragile.

Two types of errors can occur on such a superposition state. One is called a bit-flip error, which simply flips a 0 to a 1 and vice versa. A qubit is also vulnerable to phase-flip errors, which flip the sign of the phase relationship between 0 and 1 in a superposition state.
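
The two error types can be made concrete with a single qubit a|0> + b|1>, stored here as a pair of amplitudes. In this minimal plain-Python sketch (function names are illustrative), a bit flip swaps the amplitudes of 0 and 1, while a phase flip keeps both measurement probabilities intact and only reverses the sign of the |1> amplitude.

```python
# A single-qubit state a|0> + b|1>, stored as the amplitude pair (a, b).
State = tuple[complex, complex]


def bit_flip(state: State) -> State:
    """Pauli X error: swaps the amplitudes of |0> and |1>."""
    a, b = state
    return (b, a)


def phase_flip(state: State) -> State:
    """Pauli Z error: keeps the |0> amplitude and flips the sign of the |1> amplitude."""
    a, b = state
    return (a, -b)


def probabilities(state: State) -> tuple[float, float]:
    """Probabilities of reading 0 or 1 when measuring in the 0/1 basis."""
    a, b = state
    return (abs(a) ** 2, abs(b) ** 2)


psi = (0.6, 0.8)        # 0.6|0> + 0.8|1>, an arbitrary example state
print(bit_flip(psi))    # (0.8, 0.6): the 0/1 probabilities are swapped
print(phase_flip(psi))  # (0.6, -0.8): same probabilities, opposite relative sign
print(probabilities(psi) == probabilities(phase_flip(psi)))  # True
```

Because a phase flip leaves the 0/1 measurement probabilities unchanged, it cannot be spotted by simply measuring the qubit, which is why a complete code must check for both error types.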

Classical error correction employs redundancy: for instance, it stores the information multiple times and, if these copies are later found to disagree, simply takes a majority vote. Copying quantum information, however, is not possible due to the no-cloning theorem. But it is possible to spread the information of one qubit onto a highly entangled state of several (physical) qubits, even hundreds or thousands of them.
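
For comparison, here is a minimal sketch of the classical scheme described above: a three-copy repetition code decoded by majority vote (plain Python; the names are illustrative). Nothing like `encode` can be written for an unknown qubit state, because the no-cloning theorem forbids making the copies in the first place.

```python
def encode(bit: int, copies: int = 3) -> list[int]:
    """Repetition code: store the same bit several times (an odd count avoids ties)."""
    if copies % 2 == 0:
        raise ValueError("use an odd number of copies so a majority always exists")
    return [bit] * copies


def flip(codeword: list[int], position: int) -> list[int]:
    """Simulate a bit-flip error at one position."""
    corrupted = list(codeword)
    corrupted[position] ^= 1
    return corrupted


def decode(received: list[int]) -> int:
    """Majority vote: return the value held by more than half of the copies."""
    return 1 if sum(received) > len(received) // 2 else 0


word = encode(1)                       # [1, 1, 1]
print(decode(flip(word, 0)))           # 1: a single error is outvoted
print(decode(flip(flip(word, 0), 1)))  # 0: two errors out of three defeat the code
```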

Quantum error correction is a critical requirement for building a practical and reliable large-scale quantum computer. Determining the joint quantum information in the code qubits is an essential step for quantum error correction because directly measuring the code qubits destroys the information contained within them.
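
The idea of learning about errors without reading out the encoded data can be sketched with the textbook three-qubit bit-flip code, used here as a simplified stand-in for the codes discussed in this article. The plain-Python bookkeeping below is not a hardware circuit (on a real device the parities are measured through ancilla qubits); it encodes a|0> + b|1> as a|000> + b|111> and computes the parities of qubit pairs (0, 1) and (1, 2). Every branch of the superposition gives the same parities, so the syndrome pinpoints which qubit flipped while revealing nothing about a or b. Function names are illustrative.

```python
Amplitudes = dict[str, complex]


def encode_bit_flip_code(a: complex, b: complex) -> Amplitudes:
    """Three-qubit bit-flip code: a|0> + b|1>  ->  a|000> + b|111>."""
    return {"000": a, "111": b}


def apply_x(state: Amplitudes, qubit: int) -> Amplitudes:
    """Bit-flip (Pauli X) error on one qubit: flip that bit in every basis string."""
    flipped = {}
    for bits, amp in state.items():
        new_bit = "1" if bits[qubit] == "0" else "0"
        flipped[bits[:qubit] + new_bit + bits[qubit + 1:]] = amp
    return flipped


def syndrome(state: Amplitudes) -> tuple[int, int]:
    """Parities of qubits (0, 1) and (1, 2): the outcomes of the Z0Z1 and Z1Z2 checks."""
    outcomes = {
        (int(bits[0]) ^ int(bits[1]), int(bits[1]) ^ int(bits[2]))
        for bits, amp in state.items()
        if amp != 0
    }
    if len(outcomes) != 1:
        raise ValueError("state is not an eigenstate of the parity checks")
    return outcomes.pop()


# Syndrome -> qubit to flip back (None means no bit-flip error detected).
CORRECTION = {(0, 0): None, (1, 0): 0, (1, 1): 1, (0, 1): 2}

encoded = encode_bit_flip_code(0.6, 0.8)
for qubit in range(3):
    damaged = apply_x(encoded, qubit)
    culprit = CORRECTION[syndrome(damaged)]
    print(qubit, syndrome(damaged), apply_x(damaged, culprit) == encoded)
```

This toy code only catches bit flips; the surface code adds a second family of checks that is sensitive to phase flips, which is how it detects both error types.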

One such error correction scheme is the surface code, which spreads quantum information across many qubits. It requires only nearest-neighbor interactions to encode one logical qubit, which can make that logical qubit far more robust than the individual physical qubits. “Up until now, researchers have been able to detect bit-flip or phase-flip quantum errors, but never the two together. Previous work in this area, using linear arrangements, only looked at bit-flip errors, offering incomplete information on the quantum state of a system and making them inadequate for a quantum computer,” said Jay Gambetta, a manager in the IBM Quantum Computing Group.

Scalable design

“Our four-qubit results take us past this hurdle by detecting both types of quantum errors and can scale to larger systems, as the qubits are arranged in a square lattice as opposed to a linear array.” IBM’s novel quantum bit circuit design, based on a square lattice of four supercooled, superconducting qubits on a chip roughly one-quarter-inch square, shows the best potential to scale to much larger dimensions.

Because these qubits can be designed and manufactured using standard silicon fabrication techniques, IBM anticipates that once a handful of superconducting qubits can be manufactured reliably and repeatedly, and controlled with low error rates, there will be no fundamental obstacle to demonstrating error correction in larger lattices of qubits. The work at IBM was funded in part by the IARPA (Intelligence Advanced Research Projects Activity) multi-qubit-coherent-operations program.

Packing challenge

“What we’ve done thus far is to demonstrate some of the concepts of error correction and detection,” says Jerry Chow at IBM’s Thomas J. Watson Research Center in Yorktown Heights, New York. “What we’re doing with this programme is aiming for larger system sizes which permit the ability to encode a logical qubit.”

Chow says they need around 20 physical qubits to create one logical qubit, but packing the qubits close together will be tricky. “When you put many of them together, you don’t know that they are going to work the same way as when you just have one,” he says. “How you properly engineer this larger chip is going to be a big challenge.”
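
Chow’s estimate of around 20 physical qubits per logical qubit is in line with a common surface-code layout. Assuming the rotated surface code (an assumption: the article does not say which layout Chow has in mind, and IBM’s heavy-hexagon codes use a different arrangement), a distance-d patch uses d² data qubits plus d² - 1 measurement qubits and can correct up to (d - 1) // 2 errors. The helper below is hypothetical and only does this bookkeeping.

```python
def rotated_surface_code_qubits(distance: int) -> dict[str, int]:
    """Physical-qubit budget for one logical qubit in a rotated surface code patch."""
    if distance < 3 or distance % 2 == 0:
        raise ValueError("use an odd code distance of at least 3")
    data = distance ** 2         # qubits that hold the encoded information
    measure = distance ** 2 - 1  # ancillas that read out the parity checks
    return {
        "data": data,
        "measure": measure,
        "total": data + measure,
        "correctable_errors": (distance - 1) // 2,
    }


for d in (3, 5, 7):
    print(d, rotated_surface_code_qubits(d))
```

At distance 3 the total is 17 physical qubits, close to the figure Chow quotes; larger distances suppress errors further at a rapidly growing qubit cost.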

IARPA’s LogiQ program envisions that success will require a multi-disciplinary approach to come up with new technical solutions that will better deal with the fragility of quantum information due to system imperfections, errors and environmental influences.

Over the next three years, IBM’s multidisciplinary team of scientists will work alongside academia and industry to help solve the challenges of fabrication, cryogenics and electronics, as well as improve software capabilities, such as error-correction coding. Knowing the way forward doesn’t remove the obstacles; we face some of the biggest challenges in the history of technological progress. But, with our clear vision, a fault-tolerant quantum computer now feels like an achievable goal within the coming decade.

IBM’s quantum computing processor advancements

Earlier IBM-developed processors include:

A 16-qubit processor that will allow for more complex experimentation than the previously available 5-qubit processor. It is freely accessible for developers, programmers and researchers to run quantum algorithms and experiments, work with individual quantum bits, and explore tutorials and simulations. Beta access is available through a new Software Development Kit available on GitHub https://github.com/IBM/qiskit-sdk-py.

IBM’s first prototype commercial processor, which has 17 qubits and leverages significant materials, device, and architecture improvements to make it the most powerful quantum processor created to date by IBM. It has been engineered to be at least twice as powerful as what is available today to the public on the IBM Cloud and it will be the basis for the first IBM Q early-access commercial systems.

“The significant engineering improvements announced today will allow IBM to scale future processors to include 50 or more qubits, and demonstrate computational capabilities beyond today’s classical computing systems,” said Arvind Krishna, senior vice president and director of IBM Research and Hybrid Cloud. “These powerful upgrades to our quantum systems, delivered via the IBM Cloud, allow us to imagine new applications and new frontiers for discovery that are virtually unattainable using classical computers alone.”

IBM has adopted a new metric to characterize the computational power of quantum systems: Quantum Volume. Quantum Volume accounts for the number and quality of qubits, circuit connectivity, and error rates of operations. IBM’s prototype commercial processor offers a significant improvement in Quantum Volume. Over the next few years, IBM plans to continue to push the technology aggressively and aims to significantly increase the Quantum Volume of future systems by improving all aspects of the processors, including incorporating 50 or more qubits. In contrast to early quantum computers that could run only a single quantum algorithm, IBM’s computer is programmable just like a regular PC, though it can only handle relatively small problems.
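
In the formulation of Quantum Volume that IBM and collaborators later published, a device runs random “square” model circuits whose width (number of qubits) equals their depth; a size n passes if the measured heavy-output probability exceeds 2/3 with statistical confidence, and the Quantum Volume is 2**n for the largest passing n. Under that definition, the Quantum Volume of 64 mentioned earlier corresponds to passing 6-qubit, depth-6 circuits. The sketch below applies only the threshold rule; it skips circuit generation and the confidence-interval test, and the probabilities in the usage example are invented for illustration.

```python
import math


def quantum_volume(heavy_output_prob: dict[int, float], threshold: float = 2 / 3) -> int:
    """Return 2**n for the largest square circuit size n whose heavy-output
    probability exceeds the threshold (confidence intervals are ignored here)."""
    passing = [n for n, p in heavy_output_prob.items() if p > threshold]
    return 2 ** max(passing, default=0)


# Hypothetical measured heavy-output probabilities, keyed by width (= depth).
measured = {2: 0.80, 3: 0.77, 4: 0.74, 5: 0.71, 6: 0.69, 7: 0.65}
qv = quantum_volume(measured)
print(qv, int(math.log2(qv)))  # 64 6
```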

Of the many viable approaches to quantum computation, IBM has chosen superconducting qubits, which can use silicon microfabrication techniques and are amenable to scaling up.

In 2014, scientists at IBM Research used a 3D qubit to extend the lifetime of the quantum state to 100 microseconds. This amount of time is sufficient to employ error correction algorithms to correct for errors in computation. The 3D superconducting qubit is about 1 millimeter long and is suspended in the center of a cavity on a sapphire chip. Performance is measured by passing microwave signals to the device’s connectors. IBM officials said that the design team is confident that it can scale up the system to hundreds of thousands of qubits.
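
To see why coherence time sets the budget for error correction, the sketch below uses a simple exponential-decay model in which the fraction of coherence remaining after time t is exp(-t / T), with T = 100 microseconds taken from the text. The 100 ns gate duration and the fidelity targets are hypothetical, and real qubits also suffer gate and readout errors that this model ignores.

```python
import math


def remaining_coherence(elapsed_us: float, coherence_us: float) -> float:
    """Exponential-decay model: fraction of coherence left after elapsed_us."""
    return math.exp(-elapsed_us / coherence_us)


def gates_within_budget(coherence_us: float, gate_ns: float, target: float) -> int:
    """Largest number of back-to-back gates that keeps remaining coherence >= target."""
    max_elapsed_ns = -coherence_us * math.log(target) * 1000.0
    return int(max_elapsed_ns // gate_ns)


T_US = 100.0     # coherence time reported in the text
GATE_NS = 100.0  # hypothetical duration of one gate
for target in (0.99, 0.9):
    print(target, gates_within_budget(T_US, GATE_NS, target))
```

Under these assumptions, only about 10 sequential gates fit before coherence drops below 99%, and about 105 before it drops below 90%, so error-correction cycles have to be fast relative to the coherence time.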

In 2015, IBM scientists unveiled two critical advances towards the realization of a practical quantum computer. For the first time, they showed the ability to detect and measure both kinds of quantum errors simultaneously, and they demonstrated a new, square quantum bit circuit design that IBM described as the only physical architecture that could successfully scale to larger dimensions.

Q-CTRL develops software to optimize performance of individual qubits

One company joining those efforts is Q-CTRL, which develops software to optimize the control and performance of the individual qubits. The IBM announcement shows venture capitalists the company is serious about developing the challenging technology, says Michael Biercuk, founder and CEO of Q-CTRL. “It’s relevant to convincing investors that this large hardware manufacturer is pushing hard on this and investing significant resources,” he says.

IBM’s quantum roadmap provides unprecedented insights into the future of quantum computing, including how the technology will develop over the short and longer terms. In making its roadmap public, IBM is committing itself to meet a series of aggressive benchmarks that will help the company maintain its leadership in quantum computing and place its clients on the path to groundbreaking achievements.

https://www.youtube.com/watch?v=LSA3pYZtRGg

IBM Q System One™

IBM Q systems are designed to one day tackle problems that are currently seen as too complex and exponential in nature for classical systems to handle. Future applications of quantum computing may include finding new ways to model financial data and isolating key global risk factors to make better investments, or finding the optimal path across global systems for ultra-efficient logistics and optimizing fleet operations for deliveries.

Designed by IBM scientists, systems engineers and industrial designers, IBM Q System One has a sophisticated, modular and compact design optimized for stability, reliability and continuous commercial use. For the first time ever, IBM Q System One enables universal approximate superconducting quantum computers to operate beyond the confines of the research lab.

Much as classical computers combine multiple components into an integrated architecture optimized to work together, IBM is applying the same approach to quantum computing with the first integrated universal quantum computing system. IBM Q System One is composed of a number of custom components that work together to serve as the most advanced cloud-based quantum computing program available, including:

- Quantum hardware designed to be stable and auto-calibrated to give repeatable and predictable high-quality qubits;

- Cryogenic engineering that delivers a continuous cold and isolated quantum environment;

- High precision electronics in compact form factors to tightly control large numbers of qubits;

- Quantum firmware to manage the system health and enable system upgrades without downtime for users; and

- Classical computation to provide secure cloud access and hybrid execution of quantum algorithms.

The design of IBM Q System One includes a nine-foot-tall, nine-foot-wide case of half-inch thick borosilicate glass forming a sealed, airtight enclosure that opens effortlessly using “roto-translation,” a motor-driven rotation around two displaced axes engineered to simplify the system’s maintenance and upgrade process while minimizing downtime – another innovative trait that makes the IBM Q System One suited to reliable commercial use.

A series of independent aluminum and steel frames unify, but also decouple the system’s cryostat, control electronics, and exterior casing, helping to avoid potential vibration interference that leads to “phase jitter” and qubit decoherence.
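
The link between phase jitter and decoherence can be illustrated numerically. If vibration adds a random phase φ to the qubit on each run, the coherence that survives averaging over many runs is the magnitude of the mean of exp(iφ); for Gaussian jitter with standard deviation σ this equals exp(-σ²/2). The Monte Carlo sketch below assumes Gaussian phase noise purely for illustration and is not a model of IBM Q System One’s actual vibration environment.

```python
import cmath
import math
import random


def coherence_after_jitter(sigma_rad: float, shots: int = 100_000, seed: int = 1) -> float:
    """|E[exp(i*phi)]| estimated over `shots` runs with Gaussian phase kicks phi."""
    rng = random.Random(seed)
    total = sum(cmath.exp(1j * rng.gauss(0.0, sigma_rad)) for _ in range(shots))
    return abs(total / shots)


for sigma in (0.1, 0.5, 1.0):
    simulated = coherence_after_jitter(sigma)
    predicted = math.exp(-sigma ** 2 / 2)
    print(f"sigma={sigma}: simulated {simulated:.3f}, predicted {predicted:.3f}")
```

Larger jitter washes out the phase relationship between 0 and 1, which is why mechanically decoupling the cryostat from sources of vibration matters.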

“The IBM Q System One is a major step forward in the commercialization of quantum computing,” said Arvind Krishna, senior vice president of Hybrid Cloud and director of IBM Research. “This new system is critical in expanding quantum computing beyond the walls of the research lab as we work to develop practical quantum applications for business and science.”

This new system marks the next evolution of IBM Q, the industry’s first effort to introduce the public to programmable universal quantum computing through the cloud-based IBM Q Experience, and the commercial IBM Q Network platform for business and science applications. The free and publicly available IBM Q Experience has been continuously operating since May of 2016 and now boasts more than 100,000 users, who have run more than 6.7 million experiments and published more than 130 third-party research papers. Developers have also downloaded Qiskit, a full-stack, open-source quantum software development kit, more than 140,000 times to create and run quantum computing programs. The IBM Q Network includes the recent additions of Argonne National Laboratory, CERN, ExxonMobil, Fermilab, and Lawrence Berkeley National Laboratory.

IBM’s recent patents

IBM secured 9,130 US patents in 2020, more than any other company as measured by an annual ranking, and that year quantum computing showed up as part of Big Blue’s research effort. Patent No. 10,622,536 governs different lattices in which IBM lays out its qubits. Today’s 27-qubit “Falcon” quantum computers use this approach, as do the newer 65-qubit “Hummingbird” machines and the much more powerful 1,121-qubit “Condor” systems due in 2023.

IBM’s lattices are designed to minimize “crosstalk,” in which a control signal for one qubit ends up influencing others, too. That’s key to IBM’s ability to manufacture working quantum processors and will become more important as qubit counts increase, letting quantum computers tackle harder problems and incorporate error correction, IBM’s Jerry Chow said.
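The article does not say exactly which lattice the patent covers. As a hedged illustration, the sketch below assumes a heavy-hexagon-style layout, the kind IBM has described for its Falcon processors, and uses the number of directly coupled neighbors per qubit as a crude proxy for crosstalk exposure. The heavy-hex graph is built as a honeycomb (drawn as a "brick wall") with an extra qubit placed on every link; the grid sizes and helper names are hypothetical.

```python
from collections import defaultdict


def add_edge(adj, a, b):
    """Record a coupling between qubits a and b in both directions."""
    adj[a].add(b)
    adj[b].add(a)


def square_lattice(rows, cols):
    """Qubits on a grid, each coupled to its up/down/left/right neighbors."""
    adj = defaultdict(set)
    for r in range(rows):
        for c in range(cols):
            if c + 1 < cols:
                add_edge(adj, (r, c), (r, c + 1))
            if r + 1 < rows:
                add_edge(adj, (r, c), (r + 1, c))
    return adj


def heavy_hex_lattice(rows, cols):
    """Honeycomb drawn as a brick wall, with an extra qubit on every link."""
    links = []
    for r in range(rows):
        for c in range(cols):
            if c + 1 < cols:
                links.append(((r, c), (r, c + 1)))
            if r + 1 < rows and (r + c) % 2 == 0:  # alternate vertical links -> hexagons
                links.append(((r, c), (r + 1, c)))
    adj = defaultdict(set)
    for a, b in links:
        mid = ("edge", a, b)  # qubit sitting on the link between a and b
        add_edge(adj, a, mid)
        add_edge(adj, mid, b)
    return adj


def degree_stats(adj):
    """Return (max, mean) number of directly coupled neighbors per qubit."""
    degrees = [len(nbrs) for nbrs in adj.values()]
    return max(degrees), sum(degrees) / len(degrees)


for name, lattice in (("square", square_lattice(5, 5)), ("heavy-hex", heavy_hex_lattice(5, 5))):
    max_deg, mean_deg = degree_stats(lattice)
    print(f"{name:9s}: {len(lattice)} qubits, max degree {max_deg}, mean degree {mean_deg:.2f}")
```

In this toy comparison no heavy-hex qubit has more than three neighbors, versus four on the square grid, which is one reason sparser layouts are associated with fewer unwanted interactions.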

For quantum computers to be widely used and, more importantly, to have a positive impact, it is imperative to build programmable quantum computing systems that can implement a wide range of algorithms and programs. Quantum computing is expanding into multiple industries, such as banking, capital markets, insurance, automotive, aerospace, and energy. “In years to come, the breadth and depth of the industries leveraging quantum will continue to grow,” Dasgupta noted.

Industries that depend on advances in materials science will start to investigate quantum computing. For instance, Mitsubishi and ExxonMobil are using quantum technology to develop more accurate chemistry simulation techniques for energy technologies. Dasgupta also said carmaker Daimler is working with IBM scientists to explore how quantum computing could advance the next generation of EV batteries. Problems whose cost grows exponentially with their size, such as molecular simulation in chemistry, optimization in finance and certain machine learning tasks, remain intractable for classical computers.
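A back-of-the-envelope calculation shows why molecular simulation scales so badly. Assume a molecule described by n spin-orbitals: it has 2^n possible occupation configurations, so storing a full wavefunction over them as complex128 amplitudes (16 bytes each) takes 16 × 2^n bytes, the same as a brute-force simulation of n qubits. The sizes below reuse the 27- and 65-qubit counts mentioned earlier plus 50 for comparison. This is an illustrative upper bound for naive storage; practical classical methods use approximations and structure to do far better on many problems.

```python
def statevector_bytes(n: int, bytes_per_amplitude: int = 16) -> int:
    """Bytes needed to store all 2**n complex amplitudes (16 bytes each for complex128)."""
    return (2 ** n) * bytes_per_amplitude


for n in (27, 50, 65):
    gib = statevector_bytes(n) / 2**30  # convert bytes to GiB
    print(f"{n} orbitals/qubits -> {gib:,.0f} GiB")
```

Each added orbital or qubit doubles the memory, so 27 needs about 2 GiB while 50 already needs about 16 million GiB.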

Patent No. 10,810,665 governs a higher-level quantum computing application for assessing risk, a key part of how financial services companies decide how to invest money. The more complex the options being evaluated, the slower the computation, but IBM says its approach still outpaces classical computers.
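The article does not describe the patented algorithm. A widely studied quantum approach to this kind of risk estimation is amplitude estimation, whose error shrinks roughly as 1/M in the number of queries M, compared with about 1/√N for N classical Monte Carlo samples. The sketch below, under those assumptions, estimates a tail-loss probability classically for a hypothetical standard-normal loss model and then compares rough sample counts for a target error ε, with constant factors dropped. It illustrates the scaling argument only and is not IBM's method.

```python
import math
import random


def monte_carlo_tail_probability(threshold: float, samples: int, seed: int = 0) -> float:
    """Classical Monte Carlo estimate of P(loss > threshold).

    The loss is assumed (hypothetically) to be standard-normal distributed.
    """
    rng = random.Random(seed)
    hits = sum(1 for _ in range(samples) if rng.gauss(0.0, 1.0) > threshold)
    return hits / samples


def samples_needed(epsilon: float) -> tuple[int, int]:
    """Rough counts to reach additive error epsilon, constants dropped.

    Classical Monte Carlo: error ~ 1/sqrt(N) -> N ~ 1/epsilon**2
    Amplitude estimation:  error ~ 1/M       -> M ~ 1/epsilon
    """
    return round(1 / epsilon**2), round(1 / epsilon)


exact = 0.5 * math.erfc(2.0 / math.sqrt(2))  # P(Z > 2) for a standard normal
estimate = monte_carlo_tail_probability(2.0, 100_000)
print(f"exact={exact:.4f}, Monte Carlo={estimate:.4f}")

for eps in (1e-2, 1e-3, 1e-4):
    classical, quantum = samples_needed(eps)
    print(f"epsilon={eps:g}: ~{classical:,} classical samples vs ~{quantum:,} amplitude-estimation queries")
```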

Patent No. 10,599,989 describes a way of speeding up some molecular simulations, a key potential promise of quantum computers, by finding symmetries in molecules that can reduce computational complexity.
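The patent's specific technique is not described here. As a hedged illustration of the general idea, the sketch below uses two conserved quantities, the number of spin-up and spin-down electrons, to restrict which occupation states a simulation must consider. The 12-spin-orbital, 6-electron system and the even/odd spin-ordering convention are hypothetical choices for the example. Molecular point-group symmetries, which the patent concerns, can reduce the problem further in a similar spirit.

```python
from itertools import product
from math import comb


def symmetry_sector(n_spin_orbitals: int, n_up: int, n_down: int) -> list[tuple[int, ...]]:
    """Occupation bitstrings with fixed spin-up and spin-down electron counts.

    Assumed convention: even indices are spin-up orbitals, odd indices spin-down.
    """
    sector = []
    for bits in product((0, 1), repeat=n_spin_orbitals):
        if sum(bits[0::2]) == n_up and sum(bits[1::2]) == n_down:
            sector.append(bits)
    return sector


n, n_up, n_down = 12, 3, 3  # hypothetical small molecule: 12 spin-orbitals, 6 electrons
full = 2 ** n
reduced = len(symmetry_sector(n, n_up, n_down))
closed_form = comb(n // 2, n_up) * comb(n // 2, n_down)
print(f"full space: {full} states, symmetry sector: {reduced} states (closed form {closed_form})")
```

Here the symmetries cut 4,096 candidate states to 400, and the saving grows rapidly with molecule size.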

International Defense Security & Technology Your trusted Source for News, Research and Analysis
